Introduction

In 2012, Knight Capital Group deployed a software update to its trading platform. Within minutes, the system began behaving erratically and placing trades that no one had intended. In the roughly 45 minutes it took to stop it, the bug cost the company $440 million and almost put it out of business. This failure was not caused by a single “missed test.” The breakdown came from the software’s release and validation processes.

This example now serves as a case study of what happens when testing and release procedures do not account for real production risks. The reality is that most bugs won’t cost you anywhere near that much, but they will cost you something: revenue, customer trust, and development time.

There are dozens of testing types out there, and everyone has different opinions. While some people vouch for test-driven development, others find it impractical. Some teams automate aggressively, while others still rely on manual testing where it makes sense.

Instead of adding to that debate, this guide focuses on what actually matters: which testing strategies and types are useful in practice, what problems they’re good at catching, and when they’re probably not worth the effort.

What Is Software Testing

Software testing is the process of checking whether a system behaves as expected under real conditions. It’s not just about finding bugs or proving that something works once. Testing looks at how software handles everyday use, edge cases, mistakes, and changes over time. In practice, testing checks requirements against reality. It allows teams to verify that they’ve built the right solution and that it works as intended. Good testing looks at both the technical side and how real users interact with the system.

Types of Software Testing

A reliable software product depends on every element being tested. A feature can work perfectly on its own and still fail once it’s connected to other parts of the system. A change that looks harmless can quietly break something that already worked. And shipping broken software is worse than not shipping at all. Software testing exists to catch these problems before your product goes live and they turn into user-facing failures.

Since most software products are complex and heavily integrated, there are various types of software testing that teams use to test different elements of a product.

On the surface, there are two types of testing: manual and automated. But it’s not that simple. Manual testing branches into several subtypes, and those branch further.

A brief overview looks like this:

Experts classify software testing types in different ways, so you may come across other versions of this testing “tree.” The image above shows the core strategies that are common to most software projects.

A key thing to remember is that these testing types overlap significantly in practice. Many of them can be automated and so also count as automation testing. Many testers also follow the testing pyramid, which divides testing into three core layers: unit tests, integration tests, and end-to-end tests.

Regardless of the approach you follow in practice, a clear overview of the different types helps you make sense of and label what you’re actually doing.

Automation Testing

Automation testing involves using specialized tools and scripts to execute test cases automatically, reducing the need for human intervention. It is especially useful for repetitive tasks like regression testing, where the same tests must be run frequently after updates. By leveraging frameworks such as Selenium, Cypress, or Playwright, teams can build robust test suites that run quickly and consistently. One of its biggest advantages is speed, as automated tests can execute far faster than manual ones, especially at scale. It also improves accuracy by eliminating human error in repetitive validations and calculations. Continuous Integration and Continuous Deployment (CI/CD) pipelines often integrate automated tests to ensure faster feedback during development. However, automation requires an upfront investment in tools, scripting knowledge, and maintenance of test scripts as the application evolves. Despite this, it becomes highly cost-effective in the long run, particularly for large and complex projects with frequent releases.

Manual Testing

Manual testing is the process of evaluating software by executing test cases without the use of automation tools. Testers interact with the application as end users would, checking for bugs, usability issues, and overall functionality. It is particularly valuable in exploratory testing, where human intuition and creativity help uncover unexpected issues. Unlike automation, manual testing requires no scripting knowledge, making it more accessible for beginners or non-technical stakeholders. It also allows testers to assess aspects like user experience, visual design, and ease of use, which are difficult to automate. However, manual testing can be time-consuming and prone to human error, especially when dealing with repetitive tasks. Despite these limitations, it remains essential for scenarios where human judgment and flexibility are required.

Manual testing is further broken down into three types:

- White Box Testing: White box testing focuses on the internals of the application to verify how the code works. It checks logic paths, conditions, loops, and error handling to ensure all critical branches are exercised. These tests help uncover hidden issues like unreachable code, incorrect assumptions, or unhandled scenarios that may never surface through user-facing tests alone.

- Black Box Testing: Black box testing focuses on what the system does, not how it’s built. Testers interact with the application by providing inputs and checking outputs against expected results, without any knowledge of the internal code. This approach mirrors real user behavior and is especially useful for validating requirements, workflows, and edge cases that developers may not anticipate.

Learn in detail about black box testing vs white box testing here.

- Grey Box Testing: Grey box testing is a software testing approach that combines elements of both black-box and white-box testing. In this method, the tester has partial knowledge of the internal workings of the application but does not have full access to the source code. This limited insight allows testers to design more informed and effective test cases compared to purely black-box testing.
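
To make the difference between these perspectives concrete, here is a minimal Python sketch. The `shipping_fee` function and its pricing rules are hypothetical and exist only for illustration. The black box check is written from a made-up requirement alone, while the white box checks are written with the code open, one per branch.

```python
def shipping_fee(order_total: float, is_member: bool) -> float:
    """Hypothetical rule: members and orders of 50 or more ship free, otherwise the fee is 5."""
    if order_total < 0:
        raise ValueError("order_total must not be negative")
    if is_member:
        return 0.0
    if order_total >= 50:
        return 0.0
    return 5.0


def test_black_box():
    # Written from the requirement alone: inputs in, expected outputs out.
    assert shipping_fee(20, is_member=False) == 5.0
    assert shipping_fee(80, is_member=False) == 0.0


def test_white_box():
    # Written with the code open: one check per branch, including the boundary.
    assert shipping_fee(10, is_member=True) == 0.0       # member branch
    assert shipping_fee(50, is_member=False) == 0.0      # boundary of the >= 50 branch
    assert shipping_fee(49.99, is_member=False) == 5.0   # fall-through branch
    try:
        shipping_fee(-1, is_member=False)                # error-handling branch
    except ValueError:
        pass
    else:
        raise AssertionError("negative totals should be rejected")
```

A grey box tester would sit in between: knowing, for example, that a threshold exists at 50 without reading the full implementation, and choosing inputs around it.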

Black box testing is performed in two ways or two stages: functional testing and non-functional testing.

Functional Testing and Its Types

Functional testing is a type of software testing that verifies whether an application behaves according to its specified requirements. Instead of looking at how the code is written internally, it focuses on what the system is supposed to do from a user or business perspective. Testers provide inputs, execute specific actions, and then check if the outputs match the expected results defined in requirements or user stories. For example, if a login feature is being tested, functional testing ensures that valid credentials allow access and invalid ones are rejected correctly. It essentially answers the question: “Does this feature work as intended?” This makes it a core part of quality assurance across almost every software project. Because of its user-focused nature, it is often aligned closely with real-world use cases.
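
The login example above could be sketched in Python roughly as follows. The `login` function, the `_USERS` store, and the sample credentials are all assumptions made for illustration. The unsalted SHA-256 hash keeps the example short and is not a recommendation for real password storage.

```python
import hashlib
import hmac

# Hypothetical in-memory user store, used only for this illustration.
_USERS = {"ada": hashlib.sha256(b"correct horse").hexdigest()}


def login(username: str, password: str) -> bool:
    """Return True only when the username exists and the password matches."""
    stored = _USERS.get(username)
    if stored is None:
        return False
    supplied = hashlib.sha256(password.encode()).hexdigest()
    return hmac.compare_digest(stored, supplied)


def test_login_functional():
    # Each check maps to a requirement, not to a detail of the implementation.
    assert login("ada", "correct horse") is True       # valid credentials allow access
    assert login("ada", "wrong password") is False     # invalid password is rejected
    assert login("nobody", "correct horse") is False   # unknown user is rejected
    assert login("ada", "") is False                   # empty password is rejected
```

Note that the test never inspects how `login` compares passwords. It only states inputs and the outcome the requirement expects.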

Functional testing includes several levels, such as unit testing, integration testing, system testing, and end-to-end testing.

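- Unit Testing: Unit tests verify the smallest testable pieces of an application, such as a function or a method. Each unit is run in isolation to confirm that it produces the expected output. Unit tests run very quickly and help keep the application stable as it changes.

- Integration Testing: Integration testing checks that units or modules work correctly once they are combined. It targets the interfaces and data flow between components, catching failures that only appear when parts that work on their own are connected.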

- System Testing: System testing validates the whole application in an environment that closely resembles production. It verifies that all components work together as expected and that the system meets both functional and non-functional requirements. This testing helps catch issues that can only appear when the full system is in place.

- End-to-end (E2E) Testing: End-to-end (E2E) testing is a type of testing that verifies an entire application workflow from start to finish, just as a real user would experience it. Instead of testing individual components in isolation, it checks whether all parts of the system work together correctly. E2E testing is typically slower, more complex, and more expensive compared to unit or integration testing. These tests often require a fully deployed environment and can be sensitive to small changes, making them harder to maintain.

Learn more about unit testing, integration testing, and end-to-end testing in the testing pyramid blog.
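
As a small illustration of the unit level described above, the sketch below tests one function in isolation with Python’s built-in `unittest` module. The `apply_discount` function and the `run_unit_tests` helper are hypothetical names chosen for this example.

```python
import unittest


def apply_discount(price: float, percent: float) -> float:
    """Return the price reduced by percent, rounded to cents."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (1 - percent / 100), 2)


class ApplyDiscountTest(unittest.TestCase):
    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_returns_original_price(self):
        self.assertEqual(apply_discount(99.99, 0), 99.99)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(100.0, 120)


def run_unit_tests() -> unittest.TestResult:
    """Run the suite programmatically and return the result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ApplyDiscountTest)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

Because the function has no database, network, or file dependencies, the suite runs in milliseconds, which is what makes unit tests practical to run on every change.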

Additional Functional Testing Types (Supporting Testing Layers)

Some testing types are not strictly classified as functional testing, but in practice they include or support functional checks.

- Smoke Testing: Smoke testing is not a functional testing type as such but a form of build verification testing (BVT). It’s a broad, high-level test that includes functional checks to ensure the critical functionalities of a new build are stable.

- Sanity Testing: Sanity testing is a narrow, deep check performed on a stable build to verify that specific bug fixes or code changes work correctly. It can also be classified as a narrowed regression test.

- API Testing: API testing is functional testing at the service layer. It verifies request/response behavior, business logic in APIs, and data correctness.

- Database Testing: Database testing is also largely functional (backend functional validation) at the data layer. It checks data integrity, CRUD operations, stored procedures and queries, and data consistency with the UI and API.
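
A database test of the kind described above might look like the following sketch, which uses Python’s built-in `sqlite3` module with an in-memory database. The `users` table and its columns are assumptions made for the example, not a schema from any particular product.

```python
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    """Create the hypothetical table used by the test."""
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"
    )


def test_crud_and_integrity() -> None:
    conn = sqlite3.connect(":memory:")  # throwaway database, nothing touches disk
    create_schema(conn)

    # Create and read
    conn.execute("INSERT INTO users (email) VALUES (?)", ("ada@example.com",))
    row = conn.execute("SELECT email FROM users WHERE id = 1").fetchone()
    assert row == ("ada@example.com",)

    # Update
    conn.execute("UPDATE users SET email = ? WHERE id = 1", ("ada@example.org",))
    assert conn.execute("SELECT email FROM users").fetchall() == [("ada@example.org",)]

    # Integrity: the UNIQUE constraint must reject a duplicate email
    try:
        conn.execute("INSERT INTO users (email) VALUES (?)", ("ada@example.org",))
    except sqlite3.IntegrityError:
        pass
    else:
        raise AssertionError("duplicate email should violate the UNIQUE constraint")

    # Delete
    conn.execute("DELETE FROM users WHERE id = 1")
    assert conn.execute("SELECT COUNT(*) FROM users").fetchone() == (0,)
    conn.close()
```

Running against a fresh in-memory database keeps each test independent, at the cost of not exercising engine-specific behavior of a production database.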

Non-Functional Testing and Its Types

Non-functional testing checks how well a system works, rather than whether specific features work. While functional testing asks, “Does the login button work?”, non-functional testing asks things like “How fast does it respond?”, “Can it handle 10,000 users?”, or “Is it secure and easy to use?” It focuses on qualities such as performance, usability, reliability, scalability, and security. It’s especially critical for real-world readiness, where user experience and system stability matter just as much as functionality.

Non-functional testing includes several types, such as performance testing, usability testing, compatibility testing, and security testing.

- Performance Testing: Performance testing assesses how the system responds to varying loads. As usage rises, it considers response time, resource consumption, and overall stability. These tests prevent failures during demand spikes and help teams understand system limitations. Three common types of performance tests are load testing, stress testing, and stability testing.

- Usability Testing: Usability testing evaluates how easy and intuitive a product is for real users to interact with. It focuses on user experience by observing how people navigate the interface, complete tasks, and respond to the design. Testers often look for issues like confusing layouts, unclear instructions, or unnecessary steps that slow users down.

- Security Testing: Security testing focuses on protecting the system and its data from threats. It finds defects like exposed data, exploitable inputs, and poor access controls. This type of testing is critical for reducing risk and ensuring the application can withstand real-world attacks.

- Compatibility Testing: Compatibility testing ensures an application works correctly across different environments, such as operating systems, browsers, devices, networks, and hardware configurations. Its main goal is to verify that the software delivers a consistent user experience regardless of where or how it is accessed.
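
Of the types above, performance testing is the easiest to sketch in code. The example below is a deliberately small load test in Python: `handle_request` is a hypothetical stand-in for the system under test, and the request count and concurrency level are arbitrary values chosen for illustration. Real load tests usually target a deployed service with a dedicated tool.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the system under test."""
    time.sleep(0.001)  # simulate a small amount of work
    return payload * 2


def timed_call(payload: int) -> float:
    """Call the handler once and return the elapsed time in seconds."""
    start = time.perf_counter()
    handle_request(payload)
    return time.perf_counter() - start


def run_load(total_requests: int, concurrency: int) -> dict:
    """Fire total_requests calls across a thread pool and summarize latency."""
    if total_requests < 1:
        raise ValueError("total_requests must be at least 1")
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(timed_call, range(total_requests)))
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = (95 * len(latencies) + 99) // 100 - 1
    return {
        "requests": len(latencies),
        "median_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "max_s": latencies[-1],
    }
```

Calling `run_load(200, 20)` and then `run_load(200, 100)` and comparing the summaries gives a rough picture of how latency shifts as concurrency rises, which is the question load and stress tests are meant to answer.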

Other Types of Software Testing

There are other types of testing that are not commonly included in charts because they can occur at different levels and intervals, depending on how your product is developed and shipped. These testing types include:

Acceptance Testing

Acceptance testing determines if the software is ready to be delivered to the users. It verifies the system from a business and a user perspective, and it often involves stakeholders and product owners. The focus is on confidence, verifying that the software meets expectations and supports real-world use.

There are various types of acceptance testing, and which ones apply depends heavily on the project’s needs and requirements.

- User Acceptance Testing (UAT): User acceptance testing is performed by the end users or clients to ensure the software meets real-world business needs and works as expected in practical scenarios. It focuses on usability and business workflows rather than technical issues.

- Business Acceptance Testing (BAT): This type is performed to verify whether the software aligns with business goals, processes, and requirements. It is usually carried out by business analysts or stakeholders.

- Contract Acceptance Testing (CAT): This ensures the software meets the conditions and requirements specified in a contract between the client and the development team. It is important in outsourced or vendor-based projects.

- Regulatory Acceptance Testing (RAT): This checks whether the software complies with legal, industry, or government regulations. It is critical in sectors like healthcare, finance, and aviation.

- Alpha Testing: Conducted internally by the development or QA team before releasing the product to external users. It helps catch major bugs early.

- Beta Testing: Done by a limited group of real users outside the organization in a real-world environment. It helps gather feedback before the final release.

Learn the difference between alpha testing and beta testing in detail here.

Regression Testing

Regression testing verifies that recent changes have not broken existing functionality. As software evolves, even small updates can have unintended side effects. Regression testing acts as a safety net, helping teams move faster without constantly rechecking the same areas manually. It should run whenever the software product is changed or updated.
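
One common way to build that safety net is to pin previously fixed behavior as test cases that rerun on every change. The Python sketch below assumes a hypothetical `slugify` function and an invented bug history. Neither comes from a real project.

```python
import re


def slugify(title: str) -> str:
    """Turn a title into a URL slug.

    Hypothetical history: an earlier version produced "hello--world" for
    "Hello  World" (two spaces). Splitting on runs of characters is the fix.
    """
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


# Each entry pins behavior that must survive future changes.
REGRESSION_CASES = [
    ("Hello World", "hello-world"),    # original behavior
    ("Hello  World", "hello-world"),   # the previously fixed bug
    ("  Trim me  ", "trim-me"),        # leading and trailing whitespace
    ("C++ & Rust!", "c-rust"),         # punctuation is dropped
]


def run_regression_suite() -> list:
    """Return the cases that no longer produce their pinned output."""
    failures = []
    for title, expected in REGRESSION_CASES:
        actual = slugify(title)
        if actual != expected:
            failures.append((title, expected, actual))
    return failures
```

An empty list from `run_regression_suite()` means nothing that used to work has changed. Adding a new case each time a bug is fixed keeps the same defect from quietly returning.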

System Integration Testing

System integration testing (SIT) is a higher-level form of integration testing where multiple integrated systems or external systems are tested together as a complete ecosystem. It ensures that different systems, such as third-party services, databases, or external applications, work seamlessly with the main system. SIT focuses on end-to-end data flow and interaction between multiple systems rather than just internal modules. It is commonly used in enterprise software testing environments where software depends on multiple interconnected systems.

Software Testing Strategies and Approaches

While testing types state what you test, a testing strategy explains how you approach testing overall. It is the thinking behind the work. A testing strategy helps the team decide where to focus, what risks matter most, and which testing types actually make sense for the product and the stage it’s in.

The majority of teams don’t just use a single strategy. Rather, they combine multiple strategies based on the system, the risks, and how the software is built and released.

Below are some of the most common testing strategies and how they’re typically applied in practice.

Exploratory Testing Approach

Exploratory testing is not a structured testing type, but primarily an approach that emphasizes personal freedom and continuous learning to improve test quality. It involves simultaneous learning, test design, and execution rather than following predefined scripts. It is best described as a flexible, human-centric approach, often structured into sessions instead of test cases.

Ad Hoc Testing Approach

Ad hoc testing is considered an informal type or method of software testing. It is an unplanned, unstructured, and random approach aimed at finding defects quickly by breaking the system without using documented test cases, often relying on the tester’s intuition and experience.

Static Testing Strategy

Static testing is a software testing approach in which the application is examined without executing the code. Instead of running the program, testers review and analyze documents, requirements, design specifications, or source code to find errors early. It focuses on preventing defects rather than detecting them during execution.

Common techniques include reviews, walkthroughs, inspections, and static code analysis. Static testing is usually performed in the early stages of the software testing lifecycle, even before the software is built. It helps identify issues like unclear requirements, coding standards violations, and design flaws at a very low cost.
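
Static code analysis, one of the techniques listed above, can be sketched with Python’s built-in `ast` module, which parses source code into a tree without running it. The two rules checked here (bare `except:` clauses and missing docstrings) and the `SOURCE` snippet are assumptions chosen for illustration. Production linters apply far larger rule sets.

```python
import ast

# Sample code to inspect. It is parsed as text and never executed.
SOURCE = '''
def load(path):
    try:
        return open(path).read()
    except:
        return None

def documented():
    """Has a docstring."""
    return 1
'''


def find_issues(source: str) -> list:
    """Inspect the syntax tree and return (line, message) pairs, ordered by line."""
    issues = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            issues.append((node.lineno, "bare 'except:' hides errors"))
        if isinstance(node, ast.FunctionDef) and ast.get_docstring(node) is None:
            issues.append((node.lineno, f"function '{node.name}' has no docstring"))
    return sorted(issues)
```

Calling `find_issues(SOURCE)` reports both problems in `load` without ever opening a file, which is the defining property of static testing: defects are found from the text of the code alone.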

Dynamic Testing Strategy

Dynamic testing is a type of software testing where the application is executed and tested by running the code. It involves providing inputs to the system and validating the outputs against expected results. This type of testing is used to find runtime errors, performance issues, and functional defects. Dynamic testing includes most of the common testing types, such as unit testing, integration testing, system testing, and acceptance testing. It is performed after the code is developed and focuses on verifying actual system behavior. Unlike static testing, it checks that the software works correctly when it actually runs.

Structural Testing Strategy

A structural testing strategy focuses on the internal workings of the software. It looks at how the system is built rather than how it appears to users. This strategy is tied to the codebase, and it is usually applied in early stages and continuously during the development phase. Unit testing, code-level integration testing, and white box testing are examples of a structural testing strategy. These test types validate logic paths, data handling, error conditions, and interactions between internal components.

Behavioral Testing Strategy

A behavioral testing strategy is a software testing approach that focuses on verifying how a system behaves from the perspective of the end user or business requirements. Instead of looking at internal code structure, it verifies that the software delivers the expected outputs when users interact with it. It is commonly applied using techniques like black box testing, system testing, acceptance testing, and regression testing. Behavioral testing is especially important because it validates whether the software actually solves the problem it was built for.

Front-End Testing Approach

Front-end testing focuses on the user interface (UI) and everything users directly interact with. It checks things like:

- Layout and design consistency

- Buttons, forms, and navigation

- Browser and device compatibility

- User interactions (clicks, inputs, validations)

- UI responsiveness

It overlaps heavily with functional testing, usability testing, and compatibility testing. Front-end testing is more of a UI-focused testing scope, not a standalone formal category.

Back-End Testing Approach

Back-end testing focuses on the server-side logic and data processing that users don’t see. It checks things like:

- APIs and services

- Database operations

- Business logic

- Data integrity

- Server responses and performance

It overlaps with API testing, database testing, integration testing, and security testing. In other words, back-end testing is a system-layer testing focus, not a separate testing type.

Using TestFiesta for Software Testing

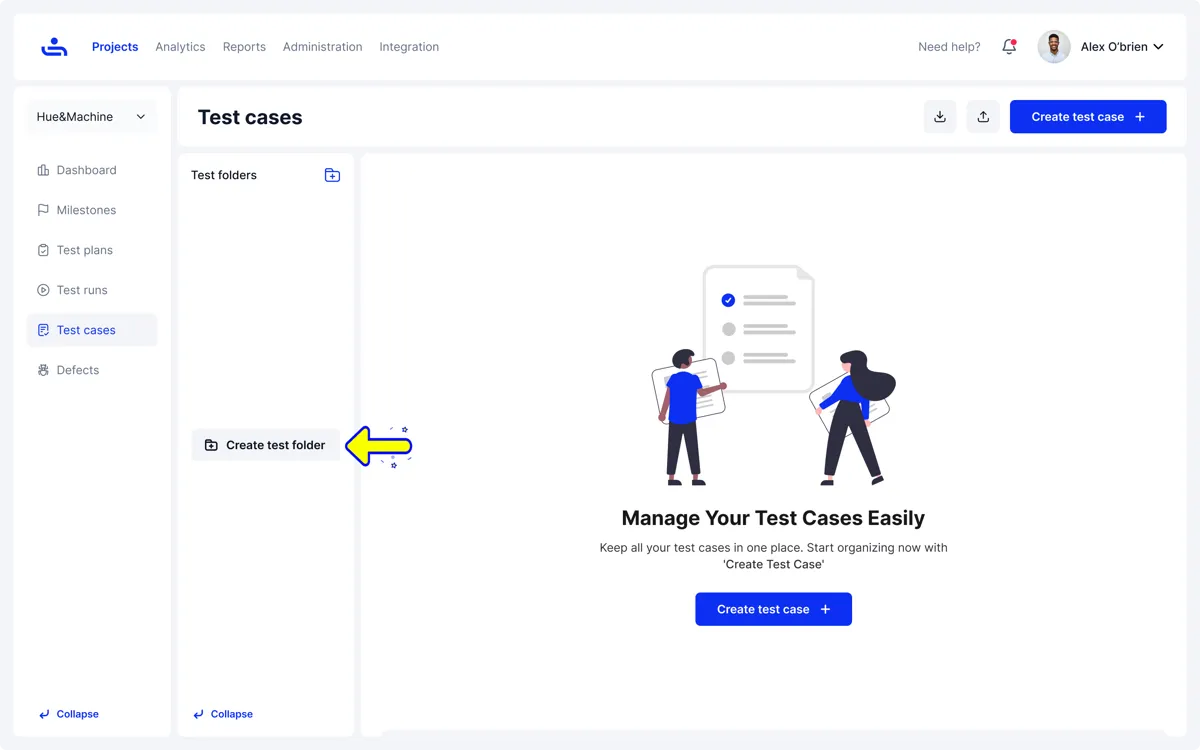

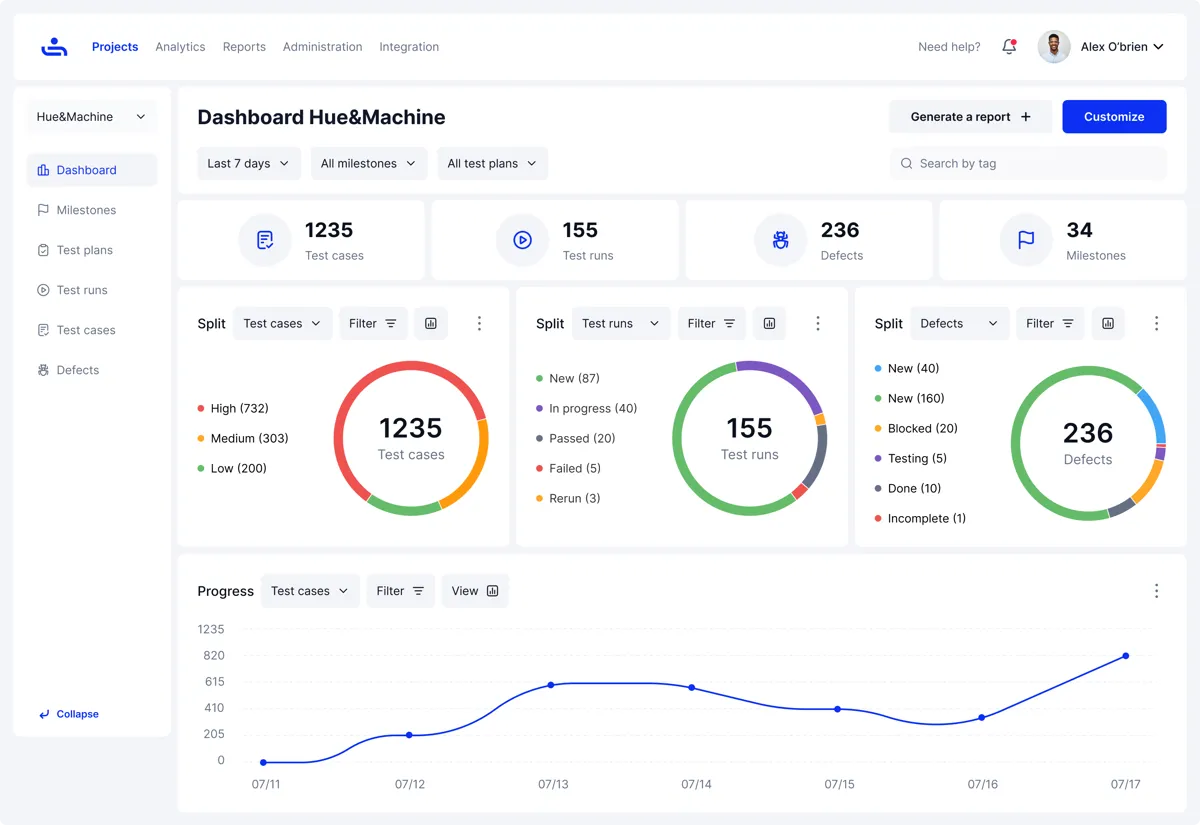

Testing strategies only work if the tools supporting them don’t get in the way. That’s where TestFiesta fits in.

TestFiesta is a flexible test case management platform designed to support different testing strategies without forcing teams into a rigid structure or workflow. Whether you’re focusing on behavioral testing, structural coverage, or a mix of approaches, TestFiesta lets teams organize test cases in a way that reflects how they actually work.

It supports flexible test management: features like tags, reusable steps, native defect tracking, and custom fields make it easier to adapt testing as products evolve. Instead of rebuilding test suites and test plans every time priorities shift, teams can adjust how tests are grouped, executed, and reviewed. This flexibility supports both fast-moving teams and those working on more complex systems, without adding unnecessary overhead.

Conclusion

Software testing doesn’t have a universal formula. The most effective testing strategies are shaped by real constraints: product complexity, team skills, release pace, and risk.

Understanding the different types of testing and how they fit into broader strategies helps teams make better decisions about where to focus their effort.

When testing is intentional and aligned with how software is built and used, it becomes a strength rather than a bottleneck.

FAQs

What is a test strategy in software testing?

A test strategy is a high-level plan that explains how testing will be approached for a product. It outlines what will be tested first, where effort should be concentrated, and how different types of testing fit together. Instead of listing individual test cases, it focuses on priorities, risks, and practical constraints.

What is the 80/20 rule in testing?

The 80/20 rule in testing suggests that a large portion of issues usually come from a small part of the system. In practice, this means a few features, workflows, or components tend to cause most problems. Teams use this idea to focus their testing efforts on high-risk or high-usage areas instead of trying to test everything with equal measure.
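
The arithmetic behind this idea is easy to check against your own defect data. The Python sketch below uses invented defect counts per product area. The `DEFECTS` numbers and the `smallest_group_covering` helper are hypothetical and only show the calculation.

```python
def smallest_group_covering(defects_by_area: dict, share_percent: int = 80) -> list:
    """Return the fewest areas, worst first, whose defects reach the given share."""
    total = sum(defects_by_area.values())
    ranked = sorted(defects_by_area.items(), key=lambda item: item[1], reverse=True)
    chosen, running = [], 0
    for area, count in ranked:
        # Integer comparison avoids floating-point rounding at the threshold.
        if running * 100 >= share_percent * total:
            break
        chosen.append(area)
        running += count
    return chosen


# Hypothetical defect counts per product area, invented for illustration.
DEFECTS = {
    "checkout": 46, "search": 34, "login": 6, "profile": 4, "settings": 3,
    "notifications": 3, "help": 2, "export": 1, "reports": 1, "about": 0,
}
```

With these made-up numbers, `smallest_group_covering(DEFECTS)` returns `["checkout", "search"]`: two of ten areas account for 80 of the 100 recorded defects. Real data rarely splits this cleanly, but the same ranking shows where extra testing effort is likely to pay off.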

What are some common software testing strategies?

Common testing strategies include functional testing, white-box testing, black-box testing, system integration testing, user acceptance testing, smoke testing, and behavioral testing. Most teams don’t rely on just one strategy. They combine several approaches based on the type of product they’re building and how it’s delivered.

Which software testing strategy is good for my product?

The best strategy depends on your product’s risk, complexity, and pace of change. A fast-moving product with frequent releases may need strong regression and automation support, while a simpler or early-stage product might benefit more from focused manual and exploratory testing. Team skills, timelines, and user impact also matter. The right strategy is the one that helps you catch the most important problems without slowing development down.