Introduction

When people talk about software testing, one of the most common distinctions you’ll hear is black box testing vs white box testing. One approach focuses on testing software from the outside, while the other examines how the system works internally. But in practice, it’s not that simple. The relationship between the two is more nuanced than most think.

Both approaches exist to answer the same fundamental question: Does the software work as expected? The difference lies in how testers approach the problem. Think of it like inspecting a car: one person checks if it drives smoothly, while another pops the hood to inspect the engine.

In this guide, we’ll break down the key differences between black box and white box testing, explore when each approach works best, and explain how they complement each other in real-world testing strategies.

What Is Black Box Testing

Picture yourself as a user. Clicking buttons, filling out forms, watching what happens next. That’s black box testing in a nutshell. You’re evaluating an application’s functionality without examining its internal code, structure, or implementation. The focus is entirely on inputs and outputs. You provide input to the product and observe its response. If the output matches the expected result based on requirements, the test passes.
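
As a minimal sketch of this input/output focus, assume a hypothetical requirement: "orders of 100 or more get 10% off." The function name `order_total` and the discount rule are invented for illustration. The point is that the test cases come only from the requirement, never from reading the function body.

```python
def order_total(subtotal: float) -> float:
    """Hypothetical system under test; a black box tester never reads this body."""
    if subtotal < 0:
        raise ValueError("subtotal must not be negative")
    discount = 0.10 if subtotal >= 100 else 0.0
    return round(subtotal * (1 - discount), 2)


# Test cases written from the requirement alone: (input, expected output).
REQUIREMENT_CASES = [
    (50.0, 50.0),    # below the threshold: no discount
    (100.0, 90.0),   # exactly at the threshold: discount applies
    (250.0, 225.0),  # well above the threshold
]


def run_black_box_cases() -> list[str]:
    """Feed each input to the system and compare the observed output."""
    failures = []
    for given, expected in REQUIREMENT_CASES:
        actual = order_total(given)
        if actual != expected:
            failures.append(f"input {given}: expected {expected}, got {actual}")
    return failures


if __name__ == "__main__":
    print(run_black_box_cases() or "all cases passed")
```

If the implementation were rewritten tomorrow, these cases would not change, because they describe required behavior rather than code.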

Why is this valuable? Because it simulates how real users actually interact with software. This makes it especially useful for validating user-facing features and workflows. Testers rely on requirements, specifications, and user stories to design their test cases. And here’s a key advantage: since black box testing doesn’t require programming knowledge, it can be performed by QA engineers, testers, or even stakeholders in some cases.

Types and Techniques of Black Box Testing

Black box testing encompasses several testing types and techniques, each designed to validate software behavior from an external perspective.

- Functional Testing: Does each application feature work as specified? Functional testing answers that question by having testers provide inputs and check whether the outputs match the expected results. It covers the expected user workflows (the “happy path”) as well as how each feature responds to invalid input.

- Non-Functional Testing: What about performance, reliability, scalability, and response time? These elements aren’t tied to specific features but absolutely impact user experience. Non-functional testing evaluates aspects like how fast the system responds under load, whether it remains stable, and how well it scales.

- Regression Testing: When you release an update or fix a bug, something unexpected can break. Regression testing guards against this by re-running existing test cases to confirm that recent changes haven’t introduced new defects. It’s your safety net for every release. (This is especially important in continuous development cycles, where smoke testing plays a similar role for core functionality.)

- UI Testing: Users interact with buttons, menus, forms, and layouts. UI testing ensures these visual elements behave as expected and remain consistently functional across interactions.

- Usability Testing: Usability testing uncovers whether the app feels intuitive and whether users can easily navigate it. Testers observe how actual users interact with the software and identify confusion points and difficulty areas, directly improving the user experience and reducing the learning curve.

- Ad Hoc Testing: Sometimes the best bugs are found by exploration rather than planning. Ad hoc testing is an informal approach that explores the application without predefined test cases or structured requirements. The goal is to discover unexpected defects through spontaneous, creative exploration.

- Compatibility Testing: Your app needs to work across different devices, operating systems, browsers, and environments. Compatibility testing verifies exactly that, ensuring users receive a consistent experience whether they’re on Chrome, Safari, Android, or iOS.

- Penetration Testing: Penetration testing simulates cyberattacks to identify security vulnerabilities. Security testers attempt to exploit weaknesses, providing teams with the information needed to strengthen defenses before real attackers find these gaps.

- Security Testing: Beyond penetration testing, security testing ensures that the application protects data and prevents unauthorized access. It verifies mechanisms like authentication, authorization, encryption, and data protection. The objective is to identify and fix potential security risks.

- Localization and Internationalization Testing: If your product was made in the US, would it work for users in Japan? Germany? Brazil? This testing verifies that applications function correctly across different languages, regions, and cultural settings. It checks translations, date/time formatting, currency displays, and cultural nuances.

What Is White Box Testing

Now flip the perspective. Instead of clicking buttons like a user, imagine being the developer. You’re inside the system, analyzing the internal structure, logic, and code of an application to verify it works correctly. That’s white box testing.

Unlike black box testing, which focuses only on inputs and outputs, white box testing requires understanding how the software is implemented. Testers analyze the code, control flow, and data paths to ensure every part of the program behaves as expected.

Who does this? Developers or testers with programming knowledge, because it involves reviewing and testing the application’s internal logic. By inspecting how code executes, white box testing uncovers issues that remain invisible from the outside: logical errors, security vulnerabilities, inefficient code paths, and hidden defects.

Types and Techniques of White Box Testing

White box testing employs several techniques to analyze internal logic and code structure.

- Unit Testing: Starting small, unit testing verifies the smallest components of a program, such as functions, methods, and individual classes. Each unit gets tested independently to ensure it performs its intended task correctly. Developers typically write these tests during development.

- Static Code Analysis: You don’t always need to run code to find problems. Static code analysis works like a spell-checker for code: testers examine the source without executing the program. Tools and manual reviews detect issues like syntax errors, security vulnerabilities, and coding standard violations.

- Dynamic Code Analysis: Some issues only appear when the code runs. Dynamic code analysis evaluates software behavior while it executes. Testers observe how the code runs and check for runtime errors, memory leaks, and performance issues that static analysis might miss.

- Statement Coverage: Did your tests actually exercise every statement in the code? Statement coverage measures what share of the statements were executed during testing. The goal is to ensure every statement runs at least once, helping identify untested code that might harbor hidden defects.

- Branch Testing: Code branches wherever it makes a decision: if statements, else clauses, and switch cases. Branch testing verifies that every possible branch is executed at least once. This includes testing both the true and false outcomes of each conditional statement, ensuring all decision paths work correctly.

- Path Testing: Beyond branches, entire execution paths also matter. Path testing involves executing different possible paths through the program’s control flow. Testers analyze the application logic to ensure all meaningful execution paths are covered, not just individual branches.

- Loop Testing: Loops repeat operations, and loop testing validates how for, while, and do-while loops behave across different iteration counts. That means testing boundary conditions: what happens when a loop runs zero times, once, and many times?
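
To make the coverage techniques above concrete, here is a small sketch. The function `sum_positive` is a hypothetical unit, chosen only because it contains one loop and one branch. The inputs are picked by reading the code, which is what makes this white box rather than black box.

```python
def sum_positive(values: list[int]) -> int:
    """Add up the positive numbers in a list (hypothetical unit under test)."""
    total = 0
    for value in values:      # loop: may run zero, one, or many times
        if value > 0:         # branch: has a true and a false outcome
            total += value
    return total


# Statement coverage: this single input executes every statement above,
# yet it never takes the false outcome of the `if`.
assert sum_positive([5]) == 5

# Branch coverage: add an input where the condition is false.
assert sum_positive([-3]) == 0

# Loop testing: zero, one, and many iterations.
assert sum_positive([]) == 0             # loop body never runs
assert sum_positive([7]) == 7            # exactly one iteration
assert sum_positive([1, -2, 3, 0]) == 4  # many iterations, both branch outcomes
```

Note that the first assertion alone reaches full statement coverage while leaving one branch outcome untested, which is why the two measures are tracked separately.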

Key Differences in Black Box and White Box Testing

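The two approaches differ mainly in what the tester can see and what the tests are based on. Black box testing treats the code as hidden, works from requirements, specifications, and user stories, and needs no programming knowledge. White box testing works from the code itself, follows its control flow and data paths, and is done by developers or testers who can read it.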

Key Similarities in Black Box and White Box Testing

Different as they seem, black box and white box testing share fundamental ground. Both exist to ensure the application functions correctly and reliably. Both improve software quality and play important roles in comprehensive testing strategies. Here’s where they overlap:

- Improve Software Quality: The primary goal of both approaches is to identify defects and ensure the application behaves as expected. They help teams deliver reliable and stable software. Neither exists in isolation; they’re two perspectives on the same mission.

- Part of a Broader Testing Strategy: Black box and white box testing rarely work alone. Modern teams use them together within a comprehensive testing strategy. Combining both perspectives helps teams detect issues at both the functional and code levels.

- Require Thoughtful Test Design: Whether you’re testing from outside or inside, effective testing requires carefully designed test scenarios and test cases. Proper planning ensures meaningful coverage and accurate results. Sloppy test design wastes time regardless of the approach.

- Surface Defects Early: Both approaches find problems before users do, though of different kinds. Black box testing tends to find missing functionality and usability problems, while white box testing tends to catch logical errors and security vulnerabilities in the code. In both cases, detecting defects early reduces the cost and effort required to fix them later.

- Support Automation: Modern testing tools enable both approaches to be automated. Teams can run automated black box tests for regression testing and automated unit tests (white box) in CI/CD pipelines. Automation helps teams run tests frequently and maintain quality throughout continuous development cycles.

- Inform Release Decisions: The insights gained from these testing methods help teams evaluate product readiness. Test results provide valuable information for deciding whether software is ready for deployment. Leadership needs both perspectives before green-lighting a release.

Real-World Applications of Black Box Testing

Black box testing is widely used across industries because it focuses on validating software behavior from the user’s perspective. By testing inputs and outputs without examining internal code, teams ensure applications function correctly in real-world scenarios.

Web Application Testing

Testing a website? Start with black box testing. Testers interact with features like login forms, search functions, checkout processes, and navigation menus to ensure they work correctly for users. This confirms that the application behaves as expected across different scenarios.

Mobile Application Testing

Mobile apps depend heavily on black box testing to validate user interactions, gestures, and interface behavior. Testers check features like registration, notifications, payment flows, and app navigation without analyzing underlying code. This ensures the app delivers a smooth and reliable user experience.

API Testing

APIs power modern applications, and black box testing validates APIs by sending requests and analyzing responses. Testers verify whether the API returns correct data, proper status codes, and meaningful error messages based on different inputs. This ensures backend services communicate properly with applications and external systems.
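
The request/response checks described above might look like the sketch below. To keep it self-contained, `handle_request` is a hypothetical in-process stand-in for a real HTTP endpoint, and the `/users/<id>` route and its data are assumptions. Against a live service, the same assertions would be made on responses from an HTTP client.

```python
import json

# Hypothetical in-memory data behind the API.
_USERS = {1: {"id": 1, "name": "Ada"}}


def handle_request(method: str, path: str) -> tuple[int, str]:
    """Stand-in for a real HTTP endpoint: returns (status code, JSON body)."""
    if method != "GET":
        return 405, json.dumps({"error": "method not allowed"})
    prefix = "/users/"
    if not path.startswith(prefix) or not path[len(prefix):].isdecimal():
        return 400, json.dumps({"error": "user id must be a number"})
    user = _USERS.get(int(path[len(prefix):]))
    if user is None:
        return 404, json.dumps({"error": "user not found"})
    return 200, json.dumps(user)


def check_api() -> None:
    """Black box checks: send requests, inspect only status and body."""
    # Correct data and status code for a valid request.
    status, body = handle_request("GET", "/users/1")
    assert status == 200
    assert json.loads(body) == {"id": 1, "name": "Ada"}

    # Meaningful error for a user that does not exist.
    status, body = handle_request("GET", "/users/999")
    assert status == 404
    assert "error" in json.loads(body)

    # Malformed input and unsupported method.
    status, _ = handle_request("GET", "/users/abc")
    assert status == 400
    status, _ = handle_request("POST", "/users/1")
    assert status == 405


check_api()
```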

E-commerce Platform Testing

Online stores require extensive black box testing to ensure critical user journeys work properly. Testers validate processes like browsing products, adding items to a cart, applying discounts, and completing payments. One glitch in checkout? That’s lost revenue. Black box testing helps catch these costly mistakes before customers do.

Banking and Financial Applications

Financial systems can’t afford failures. Black box testing verifies transaction workflows and account management features. Testers validate operations like fund transfers, balance checks, and payment processing to ensure they produce correct results. This is essential for maintaining accuracy and trust in financial applications.

Enterprise Software Testing

Large enterprise applications like CRM or ERP systems require extensive black box testing to validate business workflows. Testers verify that processes like data entry, reporting, and system integrations function correctly from the user’s perspective. When a company relies on your software for daily operations, reliability isn’t optional.

Learn how to scale testing across enterprise systems in our enterprise software testing guide.

Real-World Applications of White Box Testing

White box testing validates the internal logic and structure of software systems. By examining the underlying code, developers and testers ensure algorithms, control flows, and data handling processes function correctly. This approach proves especially valuable in complex applications where reliability, performance, and security are essential.

Unit Testing in Software Development

White box testing begins early, during unit testing, where developers verify individual components. Developers examine the internal logic of functions, classes, or modules to ensure they produce correct results under different conditions. This catches logical errors early in the development process, before code reaches integration testing.
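
A unit test of this kind could be sketched with Python's built-in `unittest` module. The function `classify_age` and its rules are hypothetical. Each test targets one internal path the developer can see in the code, including the boundary where the result changes.

```python
import unittest


def classify_age(age: int) -> str:
    """Hypothetical unit under test with three internal paths."""
    if age < 0:
        raise ValueError("age must not be negative")
    if age < 18:
        return "minor"
    return "adult"


class ClassifyAgeTest(unittest.TestCase):
    def test_minor_branch(self):
        self.assertEqual(classify_age(17), "minor")

    def test_adult_branch_at_boundary(self):
        # 18 is the first value that skips the `age < 18` branch.
        self.assertEqual(classify_age(18), "adult")

    def test_negative_age_is_rejected(self):
        with self.assertRaises(ValueError):
            classify_age(-1)


if __name__ == "__main__":
    unittest.main()
```

Saved as a file, the tests run with `python -m unittest`, which is also how they would typically be invoked from an automated pipeline.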

Code Optimization and Performance Improvement

Want faster, cleaner code? Developers use white box testing to analyze execution efficiency. By reviewing loops, conditions, and execution paths, they identify inefficient operations or redundant logic. This improves overall performance and maintainability.

Security and Vulnerability Detection

White box testing uncovers security weaknesses within the code itself. Testers analyze authentication mechanisms, data handling, and input validation to detect vulnerabilities that attackers might exploit. This is particularly important for applications handling sensitive data.

Database and Data Flow Validation

Applications handling heavy data processing benefit greatly from white box testing. Testers analyze queries, data transformations, and validation logic to ensure information is processed accurately.

Testing Complex Algorithms and Business Logic

Applications relying on advanced algorithms, such as financial calculations, recommendation engines, and machine learning models, need white box testing. Testers evaluate the internal logic to ensure algorithms produce correct results across the scenarios that matter, including edge cases. A single mathematical error in a pricing algorithm can affect thousands of users.

Continuous Integration and Automated Testing Pipelines

White box testing integrates directly into automated testing pipelines within CI/CD workflows. Developers run unit tests and code analysis tools whenever new code is added to the repository. This maintains code quality and detects issues before they reach production. Every commit triggers validation.

How Does TestFiesta Support Black Box Testing vs. White Box Testing

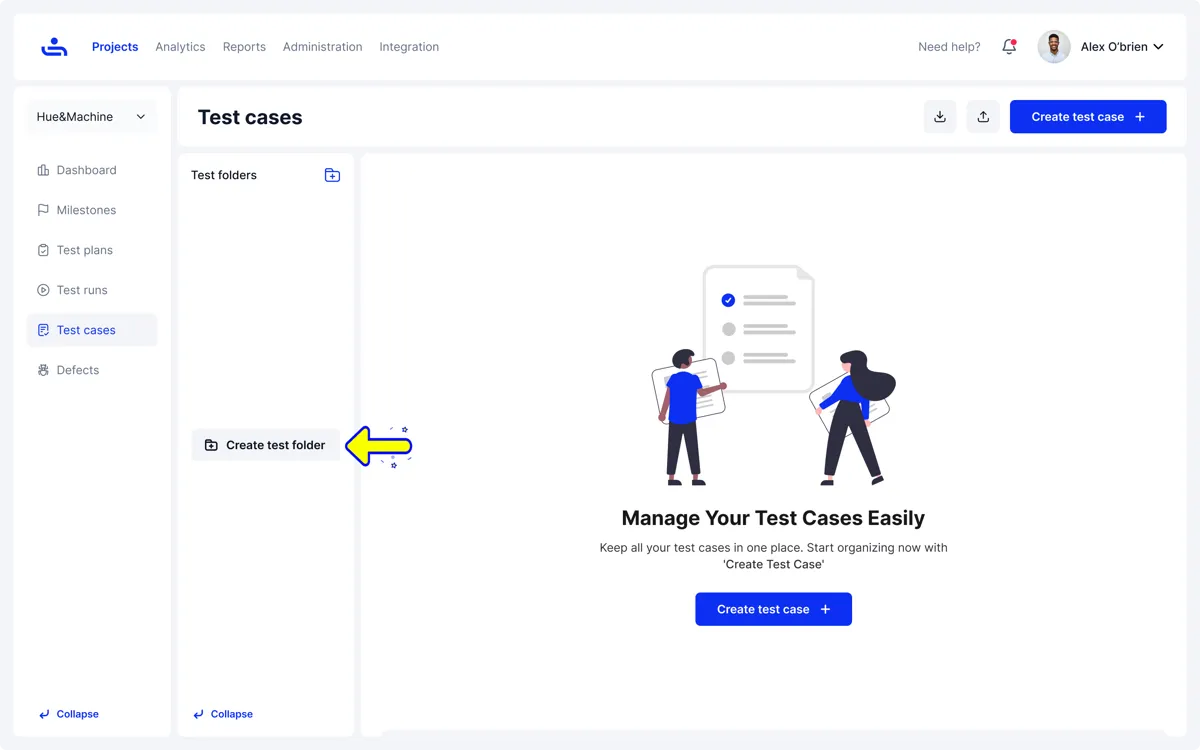

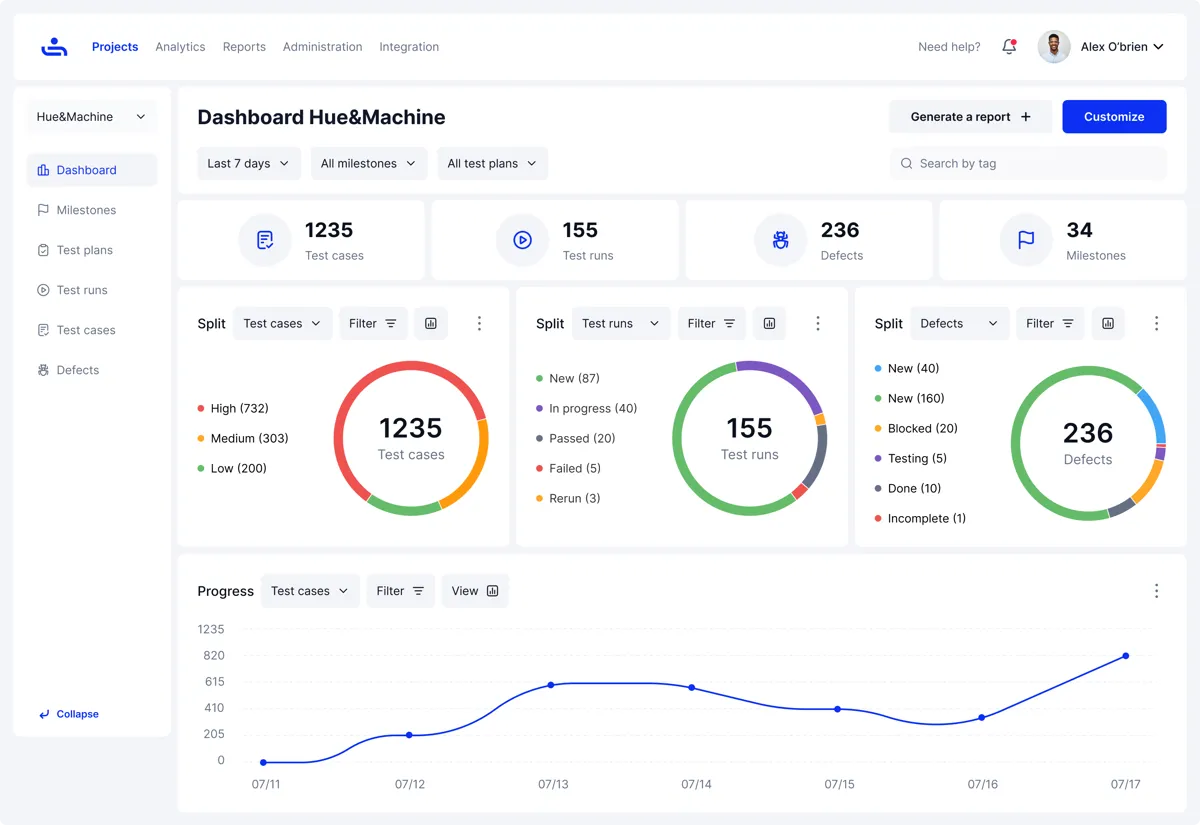

Modern testing teams use both approaches to evaluate software from different perspectives. A flexible test management platform helps organize, track, and execute these different testing approaches within a single workflow. TestFiesta supports both methods by providing tools for test case creation, execution tracking, automation integration, and reporting. This allows QA teams and developers to manage all testing activities in one place.

Supporting Black Box Testing

Black box testing focuses on validating how software behaves from the user’s perspective. TestFiesta helps teams manage these tests by organizing functional and user-driven scenarios clearly and efficiently.

Requirement-Based Test Case Management: TestFiesta enables requirement-based test case management. QA teams create test cases directly from user stories, acceptance criteria, or product requirements. This approach makes it easier to verify that features behave correctly without needing access to the underlying code. Your test cases align with business requirements, not implementation details.

Reusable Test Steps for Common Workflows: Shared steps in TestFiesta allow teams to reuse common actions, such as login flows, checkout processes, and data entry patterns, across multiple tests. Updating the shared step automatically updates all related tests, reducing maintenance effort. You write the logic once; it scales across dozens of tests.

Structured Test Suites and Execution Tracking: TestFiesta lets testers organize functional tests into suites, track execution results, and monitor pass/fail rates. This helps teams quickly assess whether the application behaves as expected. See at a glance: which features pass, which fail, and where gaps exist.

Clear Reporting and Visibility: Custom dashboards and reports provide insights into test coverage, execution progress, and defects. This visibility helps stakeholders understand how well user-facing functionality is validated.

Supporting White Box Testing

White box testing focuses on validating internal code quality and logic. TestFiesta integrates with automation tools and CI/CD pipelines to support these efforts.

Automation Integration: TestFiesta connects with unit testing frameworks and code analysis tools, allowing teams to track automation results alongside manual tests. Unit tests run automatically; results flow into TestFiesta dashboards.

Defect Tracking and Metrics: When unit tests or code analysis uncover issues, TestFiesta captures them as defects, which can be tracked right inside TestFiesta or through a third-party platform like Jira. Development teams track fixes and correlate code quality improvements with testing efforts.

Conclusion

Black box testing and white box testing represent two different but complementary approaches to software quality assurance. Black box testing focuses on validating the application from the user’s perspective. White box testing examines the internal logic and structure of the code. Each method uncovers different types of defects, making them both valuable in a well-rounded testing strategy.

Rather than choosing one over the other, modern software teams benefit from using both approaches together.

By organizing test cases, tracking execution results, and integrating automated tests, tools like TestFiesta help teams manage both testing approaches more effectively. This unified view allows developers and QA teams to collaborate more efficiently and maintain high software quality throughout the development lifecycle.

FAQs

How does white box testing differ from black box testing?

White box testing and black box testing differ mainly in visibility into the software’s internal structure. In black box testing, testers evaluate the application by providing inputs and verifying outputs without looking at the underlying code. White box testing involves analyzing internal logic, structure, and code paths to ensure the software behaves correctly. Black box testing focuses on user-facing functionality; white box testing validates internal implementation.

Should I use black box testing or white box testing?

In most cases, you shouldn’t choose one over the other. Both approaches serve different purposes and are most effective when used together. Black box testing validates how the application behaves from a user’s perspective, whereas white box testing ensures the internal code works correctly. Combining both approaches gives teams more complete testing coverage.

Which testing type is best for my software?

There is no single “best” software testing type for all software projects. The right approach depends on factors like system complexity, development process, and risks involved. Most modern teams use a mix of testing methods, including black box, white box, and automated testing, to ensure both functionality and code quality are thoroughly validated.

How should I evaluate my needs and goals for an ideal software testing type?

Start by considering your product’s requirements, risk level, and development workflow. If validating user behavior and functionality is your focus, black box testing plays a larger role. If you need to verify internal logic, security, or performance at the code level, white box testing becomes more important. Many teams adopt a balanced strategy incorporating multiple testing techniques to achieve broader coverage.

Can I do both black box testing and white box testing at the same time?

Yes, and many teams do exactly that. Black box testing and white box testing can run in parallel throughout development. Developers perform white box testing through unit tests and code analysis while QA teams conduct black box tests to validate features and workflows. Running both side by side helps teams detect issues earlier and maintain higher software quality.

What is grey box testing?

Grey box testing is a hybrid approach combining elements of both black box and white box testing. Testers have partial knowledge of the system’s internal structure but still test the application from an external perspective. This allows testers to design more informed test cases while still focusing on real user scenarios.

What’s the difference between black box, white box, and grey box testing?

The main difference lies in how much knowledge the tester has about the software’s internal structure. Black box testing gives the tester no visibility into the code; the focus remains on inputs and outputs. White box testing gives the tester full visibility into the code, so tests validate the program’s internal logic and structure. Grey box testing sits in between: testers have some understanding of system internals, but primarily test the application from a user-facing perspective.