Introduction

Individual components passing their tests is a good sign, but not enough. Modern software is rarely a single, self-contained thing. It’s a collection of modules, APIs, services, and third-party systems that all need to work together, and assuming they will, simply because each piece works in isolation, is one of the more expensive mistakes a team can make. That’s the problem system integration testing, or SIT, exists to solve.

SIT is the process of testing how different software modules or systems work together, verifying the interactions, data flow, and communication between integrated parts to ensure they function properly as a collective. It sits after unit testing and before user acceptance testing, and it is the phase where the product gets treated as a complete system for the first time.

This guide covers what testers need to know: what SIT actually involves, how it works, where it fits in the development lifecycle, and how to run it effectively.

What Is System Integration Testing (SIT): Meaning, Definition, and Goals

At its core, SIT is about one thing: making sure the pieces actually work together. System Integration Testing is the overall testing of a whole system composed of many sub-systems, with the main objective of ensuring that all software module dependencies are functioning properly and that data integrity is preserved between distinct modules. Instead of retesting individual components, SIT tests what happens when those components start talking to each other.

Where SIT Sits in the Testing Lifecycle

SIT has one prerequisite: the underlying systems being integrated must already have undergone and passed system testing. SIT then tests the required interactions between these systems as a whole, and its deliverables are passed on to user acceptance testing. Think of it as the bridge between verifying that individual parts work and confirming that the complete system is ready for real users.

What SIT Is Actually Testing

SIT isn’t a single type of test; it covers several dimensions of how integrated systems behave:

- Interfaces and data flow: Does data move correctly between modules? Is anything getting lost, corrupted, or misrouted in transit?

- Functional dependencies: When one module triggers an action in another, does the right thing happen?

- Regression across integration points: Because testing dependencies between components is a primary function of SIT, integration points are where regression testing is most often needed, confirming that recent changes haven’t broken existing connections.

- Security and reliability: By testing how different components communicate and share data, SIT can uncover hidden vulnerabilities and security risks, helping to ensure the system is not just functional but secure and reliable.
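As a rough illustration of the "interfaces and data flow" dimension, the sketch below pushes one record across a module boundary and checks that nothing was lost, corrupted, or misrouted on the way. The `orders` and `billing` modules, their field names, and the `check_order_to_invoice` helper are all hypothetical stand-ins, not part of any particular system.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Order:
    order_id: str
    customer_id: str
    amount_cents: int
    currency: str


def export_order(order: Order) -> dict:
    """Hypothetical 'orders' module: serialises an order for downstream use."""
    return asdict(order)


def import_invoice(payload: dict) -> dict:
    """Hypothetical 'billing' module: builds an invoice from the payload."""
    return {
        "invoice_for": payload["order_id"],
        "customer_id": payload["customer_id"],
        "total_cents": payload["amount_cents"],
        "currency": payload["currency"],
    }


def check_order_to_invoice(order: Order) -> list[str]:
    """Return the data-flow problems found at the boundary (empty list = OK)."""
    invoice = import_invoice(export_order(order))
    problems = []
    if invoice["invoice_for"] != order.order_id:
        problems.append("order id misrouted")
    if invoice["customer_id"] != order.customer_id:
        problems.append("customer id lost or changed")
    if invoice["total_cents"] != order.amount_cents:
        problems.append("amount corrupted in transit")
    if invoice["currency"] != order.currency:
        problems.append("currency lost or changed")
    return problems
```

In a real SIT run the two functions would be the actual modules or services, and the check would compare what one side sent with what the other side stored.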

The Goals of SIT

The goals of SIT go beyond finding bugs. Done well, it serves several purposes at once:

- Confirming the system behaves as a unified whole, not just as a collection of individually passing components.

- Catching integration defects, data mismatches, broken interfaces, and unexpected dependencies before they reach production.

- Ensuring smooth business process changes: When companies update processes to meet new goals, those changes often affect multiple systems. SIT helps make sure the updates are fully integrated and that everything still works correctly across all applications.

- Giving the team confidence that what’s being handed off to UAT is actually stable.

Who Is Involved in SIT?

SIT isn’t a one-person job. Test managers or test leads plan the scope and goals, determine the approach and schedule, and define roles and responsibilities. From there, testers execute the test cases, developers address the defects that surface, and system architects provide the technical context needed to understand how components are supposed to interact. It’s a collaborative process, and it works best when everyone understands what they’re responsible for before testing starts.

Why System Integration Testing Matters

Unit tests passing across the board is reassuring, but it doesn’t tell you what happens when those units start working together, and that gap is where some of the most damaging defects hide. Here’s why SIT deserves more attention than it typically gets.

It Catches the Bugs That Unit Testing Misses

Integration testing identifies defects that are difficult to detect during unit testing and reveals functionality gaps between different software components prior to system testing. Individual components can behave perfectly in isolation and still fail the moment they need to exchange data or trigger actions across a boundary. Those are the defects that SIT is specifically designed to surface, and they’re exactly the kind that tend to be expensive when they reach production.

It Validates How the System Behaves End-to-End

SIT validates the end-to-end functionality of the system, simulating real-world scenarios to uncover any integration-related bugs or defects. This is the first point in the testing lifecycle where the product gets evaluated as a complete, working system rather than a set of independent components, which means it’s also the first point where real user journeys can be properly tested.

It Protects Against the Ripple Effect of Updates

In the era of Agile and DevOps, software vendors roll out frequent updates. If systems are tightly integrated, unexpected problems may occur in one component when another component receives updates. SIT acts as a safety net against that ripple effect, catching regressions at integration points before they quietly break something that was working fine last sprint.

It Keeps Business Processes Intact

Software doesn’t exist in a vacuum; it supports real business workflows. When organizations change existing business processes to accommodate new requirements, those changes may have interdependencies on different modules and applications. SIT fills in these gaps and ensures that new requirements are incorporated into the system. Without it, a change that looks clean on paper can quietly break a workflow nobody thought to test.

It Reduces the Cost of Late Defects

The later a defect is found, the more it costs, in engineering time, in rework, and in the knock-on effect it has on everything downstream. By identifying and resolving potential issues early, SIT prevents costly failures later in the development or production stages. Catching an integration defect during SIT is a fraction of the cost of catching it after release, and significantly less damaging to user trust.

It Supports Agile and Continuous Delivery

SIT is an essential testing phase in agile development methodologies, helping to ensure that the system is tested comprehensively and meets the specified requirements. In a world where teams are shipping continuously, having a reliable integration testing process isn’t optional; it’s what makes fast delivery sustainable rather than reckless.

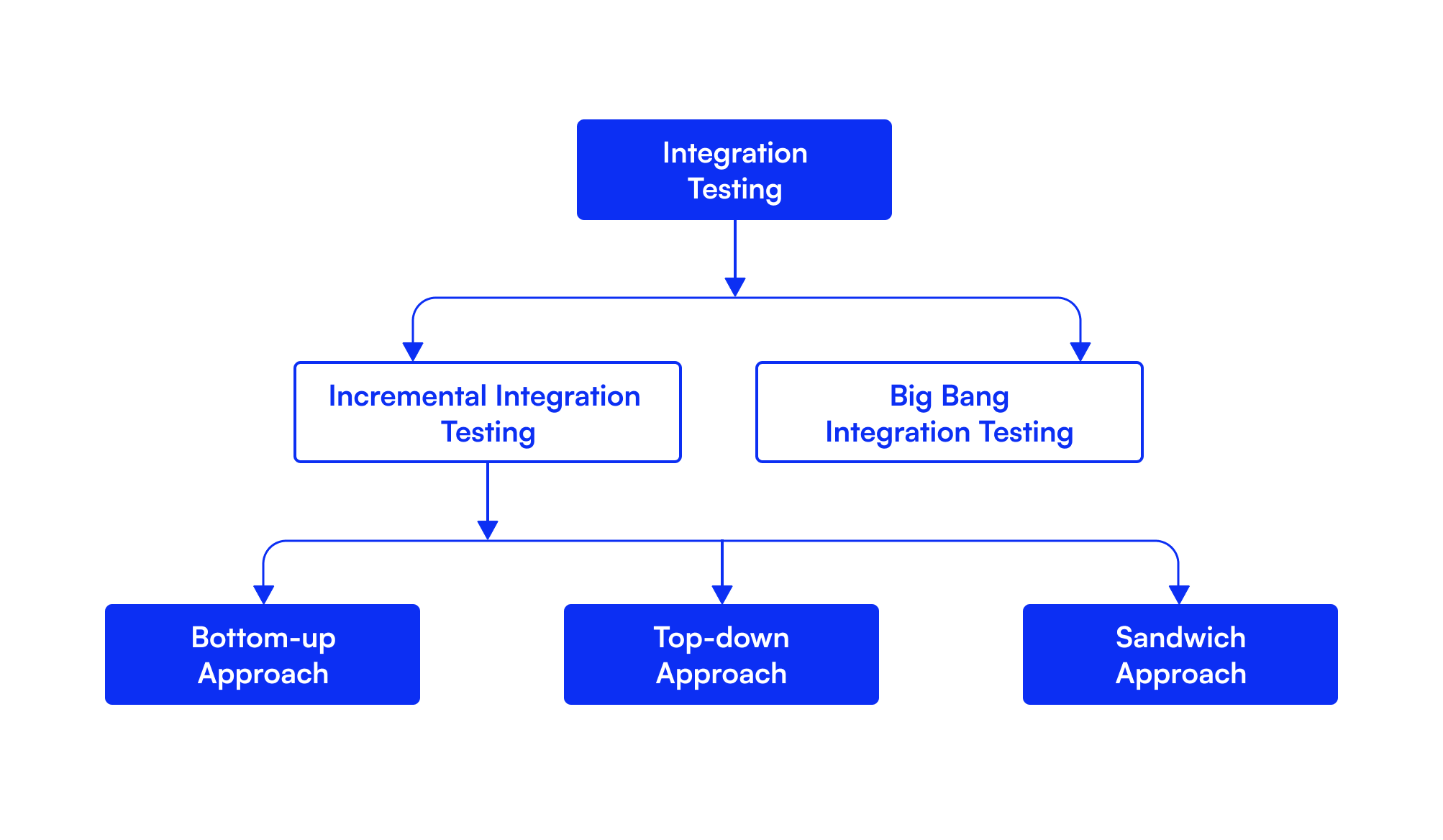

Different Techniques of System Integration Testing

There’s no single way to run SIT. The right approach depends on your system’s architecture, how far along development is, and what kind of risk you’re most concerned about. Integration testing strategies broadly fall into two categories: non-incremental and incremental. Non-incremental approaches involve integrating all components at once, which can simplify planning but increase the risk of integration failures. Incremental approaches build the system piece by piece, making it easier to isolate defects.

Here’s how each technique works in practice.

Incremental Testing

Incremental testing is the backbone of most modern SIT approaches. Rather than waiting until every module is ready before testing begins, two or more components that are logically related are tested as a unit, then additional components are combined and tested together, repeating until all necessary components are covered. The key advantage is fault isolation; when something breaks, you know exactly which integration introduced the problem. It’s slower than throwing everything together at once, but significantly less painful to debug.
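The fault-isolation idea can be sketched in a few lines. This is a minimal illustration, assuming each integration step comes with a check that exercises everything integrated so far; the component names and the simulated defect are hypothetical.

```python
from typing import Callable, Optional


def integrate_incrementally(
    steps: list[tuple[str, Callable[[set[str]], bool]]]
) -> Optional[str]:
    """Add one component at a time and run its check against the set
    integrated so far. Return the component whose addition first makes
    a check fail, or None if every step passes."""
    integrated: set[str] = set()
    for component, check in steps:
        integrated.add(component)
        if not check(integrated):
            return component
    return None


# Hypothetical integration order with one simulated defect.
STEPS = [
    ("database", lambda parts: True),
    ("orders", lambda parts: "database" in parts),
    # Simulated defect: billing expects a component that was never integrated.
    ("billing", lambda parts: "tax" in parts),
    ("notifications", lambda parts: True),
]

first_failure = integrate_incrementally(STEPS)
```

Because the checks run after every addition, the failure is attributed to the step that introduced it (`billing` in this sketch) rather than surfacing later with several possible causes.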

Bottom-Up Integration Testing

Bottom-up integration testing starts with the lower-level modules, which are tested first and then used to facilitate the testing of higher-level modules. The process continues until all modules at the top level have been tested. This approach uses drivers, temporary programs that simulate higher-level modules not yet available, to keep testing moving without waiting for the full system to be built. It’s particularly well-suited to data-heavy applications and microservices architectures where the foundation needs to be rock solid before anything else is layered on top. The tradeoff is that high-level functionality, the parts users actually interact with, gets validated last.
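A driver can be very small. The sketch below assumes a finished lower-level pricing function and an unfinished cart module above it; both names are hypothetical, and the driver simply calls the lower-level code the way the real cart is expected to.

```python
def line_total_cents(unit_price_cents: int, quantity: int) -> int:
    """Lower-level module under test (hypothetical): prices one order line."""
    if quantity < 0:
        raise ValueError("quantity must be non-negative")
    return unit_price_cents * quantity


def cart_driver(lines: list[tuple[int, int]]) -> int:
    """Driver: a temporary stand-in for the unfinished cart module.
    It feeds (unit price, quantity) pairs to the lower-level function
    and sums the results, as the real cart is assumed to do."""
    return sum(line_total_cents(price, qty) for price, qty in lines)


# Two lines: 2 x 2.50 and 1 x 10.00, expressed in cents.
total = cart_driver([(250, 2), (1000, 1)])
```

Once the real cart module exists, the driver is thrown away and the same checks run against the genuine caller.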

Top-Down Integration Testing

Top-down is essentially the reverse. Testing begins with the highest-level modules and works down through lower-level components, using stubs to simulate the behaviour of modules not yet integrated. This means user-facing functionality gets tested early, which makes it easier to catch design and flow issues before they’re baked in. The downside is that lower-level modules, often where the most critical business logic lives, get less thorough coverage until late in the process, and writing stubs for every missing module adds overhead.
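A stub is the mirror image of a driver. In this sketch, which uses hypothetical names throughout, the high-level `checkout` function is the code under test and `PaymentGatewayStub` stands in for a payment module that has not been integrated yet.

```python
class PaymentGatewayStub:
    """Stub for a lower-level payment module that is not integrated yet.
    It returns canned responses instead of doing real work."""

    def __init__(self, approve: bool = True):
        self.approve = approve
        self.calls: list[tuple[str, int]] = []

    def charge(self, customer_id: str, amount_cents: int) -> dict:
        # Record the call so the test can verify what the caller sent.
        self.calls.append((customer_id, amount_cents))
        return {"status": "approved" if self.approve else "declined"}


def checkout(customer_id: str, amount_cents: int, gateway) -> str:
    """Higher-level module under test (hypothetical)."""
    result = gateway.charge(customer_id, amount_cents)
    if result["status"] == "approved":
        return "order confirmed"
    return "payment failed"


approving_stub = PaymentGatewayStub(approve=True)
outcome = checkout("c-1", 500, approving_stub)
```

The stub only proves that `checkout` handles the responses it was told to expect. If the real gateway behaves differently, that gap stays hidden until the real module is integrated.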

Sandwich (Hybrid) Testing

Sandwich testing, also known as hybrid integration testing, is used when neither top-down nor bottom-up testing works well on its own. It combines both approaches, allowing teams to start testing from either the main module or the submodules, depending on what makes the most sense, instead of following a strict sequence. It uses both stubs and drivers, allows parallel testing across layers, and is particularly well-suited to large, complex systems. The tradeoff is cost and complexity; it takes more planning and more resources to run effectively, and it’s overkill for smaller projects.

Big Bang Integration Testing

Big bang is the simplest approach on paper and the riskiest in practice. All components or modules are integrated together at once and tested as a single unit, which means if any component isn’t complete, the entire integration process can’t execute. When it works, it works quickly and gives an immediate overview of system behaviour. When it doesn’t, it can’t reveal which individual parts are failing to work in unison, making debugging significantly harder. It’s best suited to small, simple systems where the complexity of incremental testing isn’t justified. For anything larger, the time saved upfront tends to get paid back with interest when defects surface.

The Role of QA in SIT

SIT is a team effort, but QA sits at the centre of it. While developers, architects, and business analysts all play a part, it’s the QA team that owns the process, from planning through to sign-off.

QA engineers create the detailed test cases and execute SIT, verifying that integrated components function correctly. System architects and developers work closely with QA to understand integration requirements and designs and support the creation of the testing environment. Business analysts collaborate with the QA team to ensure the integrated system aligns with business requirements and actively participate in reviewing and validating test cases.

In practice, that means QA is responsible for a lot more than just running tests. QA engineers develop and execute integration test cases, document defects correctly, and guide developers on fixes to make sure everything is resolved on time. They’re also the ones who decide when the system is stable enough to move forward, which makes their judgment and their test results critical to the process.

The broader point is this: quality in SIT isn’t the QA team’s responsibility alone, but without a strong QA function anchoring the process, integration defects have a reliable way of making it further than they should.

Entry and Exit Criteria for System Integration Testing

Before SIT begins and before it ends, there needs to be a clear agreement on what “ready” actually means. Entry and exit criteria are what provide that clarity; they define the conditions that must be met before testing starts and the conditions that must be satisfied before the team can move on. Without them, integration bugs have a reliable way of slipping through unnoticed.

Entry Criteria - Before SIT Begins:

- All individual components have completed unit testing successfully

- The integration test environment is set up and available

- Test data is prepared and sufficient to simulate real-world scenarios

- The integration test plan and test cases have been reviewed and approved

- Software requirements, design documents, and integration specs are available

- All priority bugs from unit testing have been resolved

- Roles and responsibilities across the testing team are clearly defined

Exit Criteria - Before SIT Is Signed Off:

- All planned SIT test cases have been executed

- All critical and high-priority defects have been fixed and closed

- Test coverage meets the agreed threshold across all integration points

- All test results, defects, and documentation have been updated and signed off on

- Stakeholders have reviewed and approved the integration test results

- The system is stable and ready to progress to user acceptance testing

Treating these criteria as a formality or skipping them under deadline pressure is one of the more reliable ways to end up back at square one after something breaks in production.
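One way to keep exit criteria from becoming a formality is to evaluate them mechanically. The sketch below is an assumption about how a team might encode a subset of the exit criteria above; the field names and the threshold value are illustrative, not a standard.

```python
from dataclasses import dataclass


@dataclass
class SitStatus:
    planned_cases: int
    executed_cases: int
    open_critical_or_high_defects: int
    coverage: float            # fraction of integration points covered, 0.0 to 1.0
    coverage_threshold: float  # threshold agreed by the team (assumed value)
    stakeholders_approved: bool


def unmet_exit_criteria(status: SitStatus) -> list[str]:
    """Return the exit criteria that are not yet satisfied (empty = ready)."""
    unmet = []
    if status.executed_cases < status.planned_cases:
        unmet.append("not all planned test cases executed")
    if status.open_critical_or_high_defects > 0:
        unmet.append("critical or high-priority defects still open")
    if status.coverage < status.coverage_threshold:
        unmet.append("coverage below agreed threshold")
    if not status.stakeholders_approved:
        unmet.append("stakeholder approval missing")
    return unmet


# Hypothetical snapshot taken near the end of a SIT cycle.
snapshot = SitStatus(
    planned_cases=40,
    executed_cases=38,
    open_critical_or_high_defects=1,
    coverage=0.90,
    coverage_threshold=0.85,
    stakeholders_approved=True,
)
blockers = unmet_exit_criteria(snapshot)
```

An empty list means the encoded criteria are met. Anything else is a concrete reason not to sign off yet.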

Primary Benefits of SIT Testing

SIT is one of those phases that doesn’t always get the credit it deserves, until something goes wrong without it. Here’s what it actually delivers when done well:

- Early detection of integration defects: Issues at component boundaries get caught before they compound. A data mismatch or broken API call found during SIT is a fraction of the cost of the same defect found in production.

- End-to-end validation: SIT is the first point in the testing lifecycle where the system gets evaluated as a whole. It confirms that real user journeys work correctly across all integrated components, not just in isolation.

- Reduced risk at release: By the time a system passes SIT, the team has evidence that it holds together under realistic conditions. That’s a meaningfully different level of confidence than unit tests alone provide.

- Protection against regression: When updates are made to one component, SIT catches the unintended knock-on effects before they silently break something else that was working fine.

- Better collaboration between teams: Running SIT forces developers, QA, and architects to align on how components are supposed to interact. That shared understanding tends to surface assumptions and miscommunications that would otherwise only become visible at the worst possible time.

- Supports compliance and auditability: For teams in regulated industries, SIT provides a documented record of how integrated systems were tested and what was verified, which matters when audits happen.

- Smoother handoff to UAT: A system that has passed SIT is cleaner, more stable, and better documented. That makes User Acceptance Testing faster and more focused on real user feedback rather than catching defects that should have been found earlier.

Common Challenges in SIT Testing

SIT is one of the more complex phases in the testing lifecycle, and not just technically. Here’s where teams most commonly run into trouble:

- Integration complexity: Different systems may use different data formats, structures, or naming styles, which causes issues when data moves between them. The more systems involved, the more combinations there are for things to go wrong.

- Managing dependencies: When one module isn’t ready, it holds up everything connected to it. Delays or bugs in one system can cause cascading issues throughout the integration, making it hard to keep testing on schedule.

- Incomplete or unstable modules: One module may be incomplete or unstable, requiring stubs and drivers to simulate missing components and reduce testing delays. This adds overhead and introduces its own risk if the simulated behaviour doesn't accurately reflect the real thing.

- Test environment complexity: Setting up and maintaining a consistent integration test environment is harder than it sounds. Configuration drift, when an environment gradually strays from its intended setup, can produce inaccurate results and make defects harder to trace.

- Difficulty isolating failures: When multiple systems interact, it’s hard to trace failures back to their root cause. Without proper logging and monitoring in place, debugging integration defects becomes a time-consuming process of elimination.

- Legacy system compatibility: Older systems built on outdated technologies often resist clean integration with modern applications. Mismatched data formats, deprecated APIs, and a lack of vendor support all add friction that newer systems don’t carry.

- Keeping up with Agile and DevOps pace: Frequent updates in Agile and DevOps environments can cause issues in integrated systems. End-to-end regression testing is necessary but time-consuming and often inadequate when done manually.

- Test coverage gaps: Creating test cases that cover all possible interactions and edge cases between integrated systems can be time-consuming and complex, and it’s easy to miss scenarios that only surface under specific conditions or at scale.
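Configuration drift, mentioned above, is one of the few challenges that can be checked for directly. The sketch below compares an environment’s intended settings with what is actually deployed. The setting names and values are hypothetical, and a real check would read both sides from wherever the team keeps them.

```python
ABSENT = "<absent>"  # marker for a setting that exists on one side only


def find_drift(expected: dict, actual: dict) -> dict:
    """Return {setting: (expected_value, actual_value)} for every setting
    that is missing, unexpected, or different in the actual environment."""
    drift = {}
    for key in sorted(expected.keys() | actual.keys()):
        want = expected.get(key, ABSENT)
        have = actual.get(key, ABSENT)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical intended vs. observed settings for a SIT environment.
intended = {"api_version": "v2", "timeout_s": 30, "queue": "orders"}
observed = {"api_version": "v2", "timeout_s": 5, "debug": True}

drift_report = find_drift(intended, observed)
```

Running a comparison like this before each SIT cycle helps separate genuine integration defects from failures caused by the environment itself.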

Best Practices for SIT

SIT is only as effective as the process behind it. Having the right techniques in place is one thing; executing them in a structured, disciplined way is what actually determines whether integration defects get caught before they cause problems. Here are the practices that make the biggest difference.

Set Well-Defined Objectives

Before a single test gets written, the team needs to agree on what SIT is actually trying to achieve. Clear goals help focus testing efforts, ensure comprehensive coverage, and facilitate early detection of integration issues. Without them, testing becomes broad and unfocused: teams end up covering some areas twice and missing others entirely. Define the scope, the integration points being tested, and what a successful outcome looks like before anything else.

Identify and Document Test Cases

Develop detailed test cases covering both positive and negative scenarios. This helps ensure that interactions and edge cases between integrated systems are validated thoroughly. Every test case should include the input data, expected outcome, and any dependencies. Maintaining all test assets, such as test scripts and results, in a centralised location means all teams can easily access them, which matters more than it sounds when multiple teams are working across the same integration points simultaneously.
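A test case that records its input data, expected outcome, and dependencies can be written down as plain data. The following is a sketch under assumed names: `reserve_stock` stands in for a call from an orders module into an inventory module, and the three cases cover one positive and two negative scenarios.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntegrationCase:
    name: str
    input_quantity: int
    stock_on_hand: int
    expected: str
    depends_on: tuple[str, ...]  # systems that must be available for this case


def reserve_stock(quantity: int, stock_on_hand: int) -> str:
    """Hypothetical call from an orders module into an inventory module."""
    if quantity <= 0:
        return "rejected: invalid quantity"
    if quantity > stock_on_hand:
        return "rejected: insufficient stock"
    return "reserved"


CASES = [
    IntegrationCase("positive: stock available", 2, 5,
                    "reserved", ("inventory",)),
    IntegrationCase("negative: not enough stock", 9, 5,
                    "rejected: insufficient stock", ("inventory",)),
    IntegrationCase("negative: zero quantity", 0, 5,
                    "rejected: invalid quantity", ("inventory",)),
]


def run_cases(cases: list[IntegrationCase]) -> list[str]:
    """Return the names of cases whose actual outcome differs from expected."""
    return [
        case.name
        for case in cases
        if reserve_stock(case.input_quantity, case.stock_on_hand) != case.expected
    ]


failed = run_cases(CASES)
```

Keeping cases as data makes them easy to review, store centrally, and extend when a new edge case turns up.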

Create Accurate Test Data

Test data quality directly affects the reliability of SIT results. Defining specific expected outcomes leads to better test data, and it also positions you to automate basic regression tests and drive test harnesses. Test data should mirror real-world usage as closely as possible, covering typical scenarios as well as edge cases. Vague or generic test data produces vague results and makes it much harder to reproduce defects when they surface.

Implement Test Automation

Manual testing alone can’t keep up with the pace and volume that SIT demands. Automated testing can quickly execute test cases, while manual testing covers aspects of the integration that may be difficult to automate; combining both ensures that all aspects of the integration are thoroughly tested. Automation is particularly valuable at integration points that are touched frequently, where running tests manually after every change simply isn’t sustainable.

Track and Analyze System Performance

Functional correctness is only part of the picture. During testing, continuously track performance metrics to identify bottlenecks or degradation points caused by integration. A system can pass every functional test and still fall apart under load: slow response times, memory leaks, and throughput issues often only emerge when components are working together under realistic conditions. Catching these during SIT is significantly cheaper than catching them in production.
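One simple way to track this is to compare a high percentile of response times against an agreed budget, since slow outliers are easy to miss when only typical values are inspected. The sketch below uses the nearest-rank percentile method; the sample timings and the 200 ms budget are made-up values for illustration.

```python
import math


def percentile(samples_ms: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def within_budget(samples_ms: list[float], budget_ms: float, pct: float = 95) -> bool:
    """True if the chosen percentile of the samples is within the budget."""
    return percentile(samples_ms, pct) <= budget_ms


# Hypothetical response times (ms) for one call that crosses two services.
SAMPLES_MS = [120, 95, 110, 480, 105, 100, 98, 130, 115, 102]

median_ms = percentile(SAMPLES_MS, 50)
p95_ms = percentile(SAMPLES_MS, 95)
ok = within_budget(SAMPLES_MS, budget_ms=200)
```

In this made-up data set the median looks healthy, while the 95th percentile exposes a single slow call that would breach the budget.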

Record and Report Results

Keep detailed records of all executed tests, encountered defects, and resolutions. Well-documented results support transparency, assist debugging, and provide traceability for compliance and audits. Good documentation also protects the team when questions arise later about what was tested, what was found, and what was done about it. A result that isn’t recorded might as well not exist.

Re-Test After Fixes and Updates

Fixing a defect doesn’t mean the problem is fully solved, or that the fix didn’t introduce something new. After making changes, re-run relevant tests to confirm the fix works and nothing else broke. Continuous re-testing keeps the system stable as things change. Without it, a passing SIT can give a false sense of confidence.

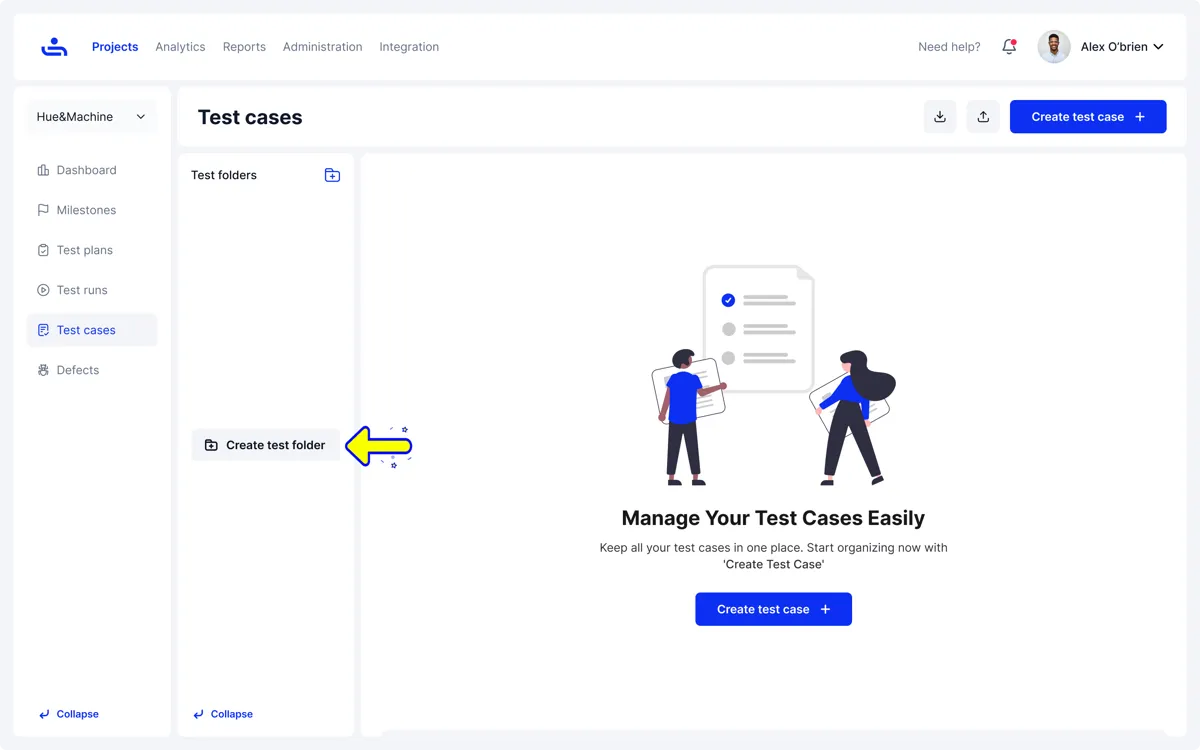

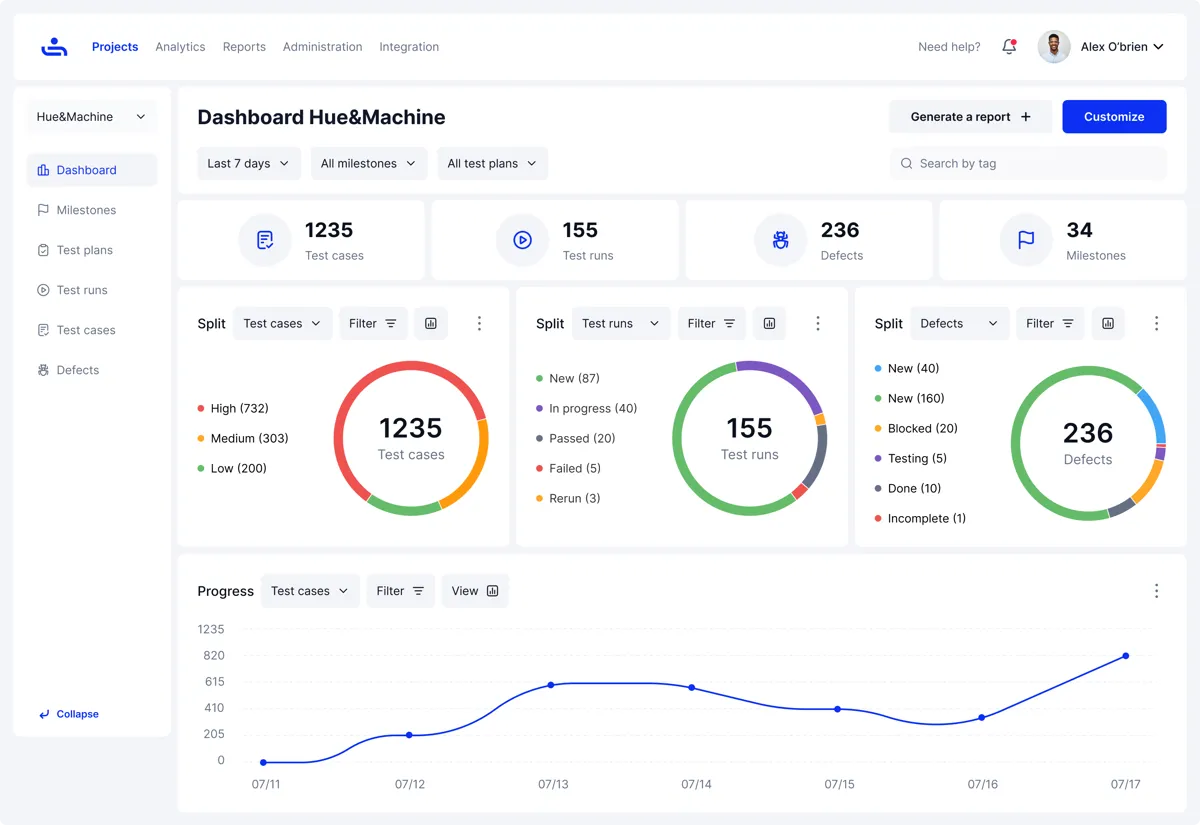

System Integration Testing (SIT) With TestFiesta

SIT doesn’t exist in isolation; it sits inside a broader testing pyramid that spans unit testing, integration testing, system testing, and UAT. The challenge for most teams isn’t understanding that pyramid; it’s having the tools to support it end-to-end without stitching together multiple platforms to do it.

TestFiesta is built to support the full testing lifecycle, not just one phase of it. Test cases can be organized and executed across every level of the pyramid, from early unit and integration tests through to full system and acceptance testing, in one place.

For SIT specifically, that means the teams running integration tests are working in the same environment as the teams above and below them in the pyramid, keeping coverage visible and handoffs clean.

Managing SIT Without the Overhead

TestFiesta makes it straightforward to create and maintain test cases mapped directly to integration points, structured by feature, module, or risk level. Native defect tracking means issues get logged, assigned, and resolved without switching tools, keeping the feedback loop tight across what is often a highly collaborative, multi-team process. And when it comes to knowing whether the system is ready to move forward, the reporting gives a clear, evidence-based picture of coverage and defect status across all integration points, with no manual dashboard updates required.

Native Jira and GitHub integrations mean defects flow directly into the development workflow without manual handoffs. Less friction, better visibility, and one less reason for things to fall through the cracks during one of the more complex phases in the testing lifecycle.

Conclusion

System integration testing is the phase where the real picture of software quality emerges. Unit tests tell you that individual components work. SIT tells you whether the system works, and that’s a meaningfully different question.

The teams that treat SIT as a formality tend to find out why it matters at the worst possible time. The ones that invest in it properly, with clear entry and exit criteria, well-documented test cases, the right techniques for their architecture, and tooling that keeps everything connected, ship with a level of confidence that unit testing alone simply can’t provide.

The core takeaways are straightforward: start SIT with defined objectives and don’t skip the entry criteria, choose an integration technique that matches your system’s complexity, catch defects at integration points before they compound downstream, and make sure the entire process is documented well enough to stand up to scrutiny.

TestFiesta supports this process end-to-end, bringing test management, defect tracking, and reporting into one place so nothing falls through the cracks.

FAQs

What is SIT in testing?

System integration testing is the process of verifying that different software modules and systems work correctly together. It focuses on the interactions, data flow, and communication between integrated components, not on whether individual parts work in isolation, but on whether they work as a whole.

Who performs SIT testing?

SIT is primarily carried out by QA engineers, often working closely with developers and system architects. QA owns the test planning and execution, developers address defects as they surface, and architects provide the technical context needed to understand how components are supposed to interact.

Why do teams need to conduct SIT testing?

Because unit tests only confirm that individual components work, they can’t tell you what happens when those components start talking to each other. SIT is what catches data mismatches, broken interfaces, and unexpected dependencies before they reach production, where they’re significantly more expensive to fix.

What are the limitations of SIT?

SIT can be time-consuming and resource-intensive, especially for complex systems with many integration points. Setting up and maintaining a stable test environment is harder than it sounds, and when failures occur across multiple interacting components, tracing them back to their root cause isn’t always straightforward. It also relies on modules being reasonably stable before testing begins; unstable components slow the entire process down.

What is the difference between Integration Testing and System Integration Testing?

Integration testing focuses on testing the interfaces between interconnected modules, while system integration testing covers a whole system composed of many sub-systems, checking that those sub-systems work together and that data integrity is preserved between them. In short, integration testing is about verifying that components connect correctly, while SIT takes a broader view, validating that the entire integrated system behaves as expected end-to-end.

Is SIT a black box testing technique?

Mostly, yes. SIT is predominantly conducted using black-box testing techniques; testers interact with the system through its interfaces without needing to know what’s happening in the underlying code. That said, some knowledge of system architecture is often useful for designing effective test cases, particularly when tracing failures across integration points.
