Introduction

Every software team reaches that nerve-wracking moment before launch: Is this actually ready? Alpha and beta testing exist to answer that question before real users experience the product. They’re often mentioned together, sometimes used interchangeably, and frequently misunderstood.

They’re not the same thing, and the distinction matters. One is about catching critical problems in a controlled setting before anyone outside the team sees the product. The other is about finding out what happens when the product meets reality: different devices, different use cases, and different people. Skipping one or confusing the two creates gaps that tend to surface at the worst possible time: after release, in front of users.

What Is Alpha Testing?

Alpha testing is the first formal round of testing a software product goes through before it reaches anyone outside the organization. It’s internal, controlled, and intentionally rigorous. The goal is to surface as many bugs, gaps, and usability issues as possible while fixes are still cheap and fast to make.

It's carried out by QA teams, developers, and sometimes internal stakeholders who put the product through its paces in a staging environment designed to simulate real-world conditions without exposing real users to something that isn’t ready yet.

The term “alpha testing” originated at IBM, where internal verification tests were labeled A, B, and C; the “A” test was the verification carried out before any public announcement. The terminology stuck, spread across the industry, and has been standard ever since.

What Alpha Testing Does

The purpose of alpha testing isn’t just to find bugs; it is to validate that the product actually works the way it is supposed to before it moves any further. That means checking core functionality, assessing usability, and confirming the software is stable enough to hand off to a wider audience. Anything critical that slips through here will eventually land in front of a real user, which is a much more expensive problem to fix.

How It Works: Two Phases, Two Perspectives

Alpha testing doesn’t happen in a single pass. It runs in two phases.

In the first, developers conduct white-box testing, examining the internal logic, code, and architecture to make sure everything functions correctly at a structural level.

In the second phase, the QA team takes over with black-box testing, evaluating the software purely from a user’s perspective without concern for what’s happening under the hood.

This two-phase approach matters because it covers both ends of the problem. White-box testing catches issues in the code that a user would never think to look for. Black-box testing catches issues a user would run into immediately: broken flows, confusing UI, and missing validations. You need both.

Types of Alpha Testing

Within this process, two core testing types are at play. White-box alpha testing goes deep into the code, checking every logical branch, statement, and condition to make sure the internal mechanics are sound. Black-box alpha testing ignores the internals entirely and focuses on whether the software behaves correctly from the outside, given a certain input.
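To make the distinction concrete, here is a minimal sketch in Python. The `shipping_fee` function and its thresholds are hypothetical, invented purely for illustration. The white-box checks are written with the code's branches in view, while the black-box checks only know the stated requirement (orders of 50 or more ship free, smaller orders pay a fee of 5).

```python
def shipping_fee(order_total: float) -> float:
    """Hypothetical function under test: flat fee, waived for large orders."""
    if order_total < 0:
        raise ValueError("order total cannot be negative")
    if order_total >= 50:
        return 0.0
    return 5.0


def white_box_tests() -> None:
    # Written with the source open: one check per branch in shipping_fee.
    try:
        shipping_fee(-1)
    except ValueError:
        pass
    else:
        raise AssertionError("negative-total branch was not exercised")
    assert shipping_fee(50) == 0.0      # boundary of the >= 50 branch
    assert shipping_fee(49.99) == 5.0   # fall-through branch


def black_box_tests() -> None:
    # Written from the requirement alone: inputs in, expected outputs out.
    cases = {0: 5.0, 20: 5.0, 50: 0.0, 120: 0.0}
    for order_total, expected_fee in cases.items():
        assert shipping_fee(order_total) == expected_fee


white_box_tests()
black_box_tests()
```

Both sets pass against the same function, but they would fail for different reasons: the white-box set breaks if a branch is removed or reordered, and the black-box set breaks if the observable behavior drifts from the requirement.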

It's also worth noting that alpha test data sets typically use synthetic rather than real data, and are kept relatively small to make debugging and root cause analysis more manageable. This keeps the environment tightly controlled and makes it easier to trace issues back to their source.
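As a rough illustration of what a small synthetic data set can look like, the sketch below generates fake user records from a fixed random seed. The field names, plan labels, and the `make_synthetic_users` helper are assumptions made up for this example, not part of any particular tool.

```python
import random


def make_synthetic_users(count: int, seed: int = 42) -> list[dict]:
    """Build a small, reproducible set of fake user records (no real data)."""
    rng = random.Random(seed)  # fixed seed: every run yields the same records
    plans = ["free", "pro", "team"]
    return [
        {
            "id": i,
            "email": f"user{i}@example.test",  # .test is a reserved TLD, never a real address
            "plan": rng.choice(plans),
            "age": rng.randint(18, 90),
        }
        for i in range(1, count + 1)
    ]


users = make_synthetic_users(20)
print(len(users), "synthetic users generated")
```

Because the seed is fixed, a failure seen on one run can be replayed against exactly the same records, which is what keeps root cause analysis manageable.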

What Are the Benefits of Alpha Testing?

Alpha testing isn’t just a box to tick before moving to beta. Done well, it’s one of the highest-leverage activities in the entire development cycle: the last internal checkpoint before the outside world gets involved. Here’s why it’s worth taking seriously:

Catching Problems While They’re Still Cheap to Fix

The further a bug gets in the development process, the more expensive it is to fix. A defect caught during alpha testing might take an hour to resolve, but the same issue after release can lead to hotfixes, rollbacks, support tickets, and even reputational damage. Alpha testing shortens the feedback loop: issues are found and fixed early, in a controlled environment, before those costs start to build up.

Validating Product Functionality

There’s a difference between individual features passing their unit tests and the entire product working the way a real user would expect it to. Alpha testing looks at the software as a whole, checking that core functionality holds up end-to-end, that integrations work together, and that nothing falls apart when you start combining features the way real users will. It’s the first time the product gets treated like a product rather than a collection of components. In the testing pyramid, it sits at the top as an end-to-end test.

Identifying Usability Issues Before Real Users Do

Because alpha testing is performed from an end-user perspective, it helps uncover usability gaps, including issues that have nothing to do with the functionality built in that specific release. A feature can work exactly as specified and still be confusing, slow, or frustrating to use. Alpha testing is where that kind of feedback surfaces, when there’s still time to act on it without disrupting a live product.

Giving the Team Confidence Before Moving Forward

There’s a meaningful difference between assuming a product is ready and actually having evidence that it is. Alpha testing builds confidence across the team, aligning expectations between stakeholders, designers, and developers before the product moves into a wider testing phase. That alignment matters. It means everyone is working from the same understanding of what’s been verified and what still needs attention.

It Reduces the Burden on Beta Testing

Beta testing is most valuable when it’s focused on real-world feedback, not on catching critical bugs that should have been found earlier. The cleaner the product is going into beta, the more useful the feedback coming out of it. Alpha testing is what makes that possible. A thorough alpha phase delivers a more robust and user-friendly product and reduces the pressure on the beta testing phase to do work it was never designed for.

Stress-Testing Performance, Not Just Functionality

Alpha testing isn’t limited to checking whether features work. Load testing is also performed during alpha testing to understand how the software handles heavy usage before real users put it under pressure. Performance issues found at this stage are far easier to diagnose and fix in a controlled environment than they are once the product is live with multiple variables.
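A very small load test can be sketched with nothing but the Python standard library. In the example below, `handle_request` is a hypothetical stand-in for whatever operation is being exercised (it just sleeps for 10 ms), and the request counts are arbitrary; a real load test would target the staging system with a dedicated tool.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import median


def handle_request(payload: int) -> int:
    """Hypothetical stand-in for the operation under load."""
    time.sleep(0.01)  # simulate I/O latency
    return payload * 2


def timed_call(payload: int) -> float:
    """Return the wall-clock duration of one request, in seconds."""
    start = time.perf_counter()
    handle_request(payload)
    return time.perf_counter() - start


def run_load_test(total_requests: int, concurrency: int) -> dict:
    # Fire requests from a pool of worker threads and collect each latency.
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(timed_call, range(total_requests)))
    # Nearest-rank 95th percentile, computed with integer arithmetic.
    p95_index = max(0, (len(latencies) * 95 + 99) // 100 - 1)
    return {
        "requests": len(latencies),
        "median_s": median(latencies),
        "p95_s": latencies[p95_index],
    }


report = run_load_test(total_requests=100, concurrency=10)
print(report)
```

Tracking the median alongside a high percentile is a deliberate choice here: the median shows typical behavior, while the 95th percentile shows how bad the slow tail gets as concurrency rises.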

Limitations of Alpha Testing

Alpha testing is valuable, but it isn’t perfect. Understanding where it falls short is just as important as knowing what it does well, because the gaps it leaves are exactly what beta testing is designed to fill.

It Can’t Fully Replicate the Real World

This is the fundamental constraint of alpha testing. Because it’s done in a controlled, internal environment, it lacks the variety of user scenarios that exist in the real world. No matter how well a staging environment is configured, it’s still a simulation. The unpredictability of real users, their devices, networks, habits, and edge cases simply can’t be replicated in-house with any real accuracy.

Internal Testers Carry Inherent Bias

The people running alpha tests have usually spent months building the product. They know how it works, they know what to click, and they know what to avoid. That familiarity makes bias toward the application almost unavoidable, which means they’re less likely to stumble across issues the way a new user would. Blind spots are a natural byproduct of proximity.

It’s Time-Consuming and Resource-Heavy

Alpha testing is thorough by design, but thorough takes time. The complete product gets tested at a high level and in-depth using different black-box and white-box techniques, which means the test execution cycle can drag on, especially if the product has many features or testing uncovers a significant number of defects. For teams already under deadline pressure, this is a real constraint that requires careful planning to manage.

It Doesn’t Cover Every Configuration

Alpha testing may not cover all the hardware and software configurations that end users actually have. A product can pass every internal test and still break on a specific browser version, operating system, or device that nobody on the team happened to test on. That kind of coverage gap is only really closed when real users, with their own setups, get involved.
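A quick bit of arithmetic shows how fast this gap opens up. The sketch below builds a naive cross product of browsers, operating systems, and screen classes, then compares it with a hypothetical list of setups an internal team has on hand. The lists are illustrative assumptions, and some combinations in a naive cross product don't exist in practice, so treat the numbers as an upper bound.

```python
from itertools import product

browsers = ["Chrome", "Firefox", "Safari", "Edge"]
operating_systems = ["Windows", "macOS", "Android", "iOS"]
screen_classes = ["phone", "tablet", "desktop"]

# Every browser x OS x screen combination: 4 * 4 * 3 = 48.
all_configs = set(product(browsers, operating_systems, screen_classes))

# Hypothetical set of combinations the internal team actually tests on.
tested_in_house = {
    ("Chrome", "Windows", "desktop"),
    ("Chrome", "macOS", "desktop"),
    ("Safari", "macOS", "desktop"),
    ("Safari", "iOS", "phone"),
    ("Chrome", "Android", "phone"),
}

untested = all_configs - tested_in_house
coverage = len(tested_in_house) / len(all_configs)
print(f"{len(all_configs)} combinations, {len(untested)} untested, {coverage:.0%} covered")
# prints: 48 combinations, 43 untested, 10% covered
```

Add browser versions or OS releases as further dimensions and the total multiplies again, which is why in-house coverage rarely keeps up.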

Some Defects Simply Won’t Surface Here

Alpha testing focuses on finding major bugs, but it may not fully address performance and usability issues that only show up under heavy user loads or varied environments. Certain problems are invisible at a small scale and only emerge when the product is under real-world pressure. That’s not a failure of the process; it's just the nature of controlled testing, and it’s why beta testing exists.

What Is Beta Testing?

If alpha testing is about getting your own house in order, beta testing is about finding out whether the house actually works for the people who are going to live in it. It’s the stage where real users test a nearly finished software product in a production environment before its official release: the final checkpoint to uncover bugs, validate usability, and confirm the product is ready for market.

The shift from alpha to beta is significant. You’re no longer in a controlled internal environment with a team that knows the product inside out. Beta testing hands the product to external users in a real-world environment, and they bring entirely different devices, habits, and expectations to the table. That diversity is exactly the point.

What Beta Testing Does

The core goal of beta testing is straightforward: catch what alpha testing missed. But beta testing serves a broader purpose than just bug hunting. It’s also an opportunity to validate hypotheses about how users will actually interact with new functionality, and to refine positioning, messaging, and communication about the product, tested against people who are now genuinely using it. For many teams, it doubles as early market validation.

Types of Beta Testing

Not every beta test looks the same, and choosing the right format matters.

The two most common types are open and closed beta testing.

In an open beta, a large number of testers, sometimes the general public, put the product through its paces before final release. In a closed beta, testing is limited to a specific set of users, which may include current customers, early adopters, or paid testers.

Beyond those two, there are more targeted approaches. Focused beta testing zeroes in on a specific feature or component rather than the product as a whole. Technical beta testing brings in the organization’s employees or technically proficient users to evaluate the product and feed observations directly back to the development team. Some teams also run post-release beta testing, a subset of live users who continue testing after launch, feeding feedback into subsequent releases.
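In practice, the difference between these formats often comes down to how access is gated. The sketch below shows two assumed approaches: an invite list for a closed beta, and a stable hash bucket for selecting a subset of live users in a post-release beta. The email addresses, function names, and the percentage mechanism are hypothetical illustrations, not a prescribed method.

```python
import hashlib

# Hypothetical invitees for a closed beta.
CLOSED_BETA_ALLOWLIST = {"alice@example.test", "bob@example.test"}


def in_closed_beta(email: str) -> bool:
    """Closed beta: only explicitly invited users get access."""
    return email.lower() in CLOSED_BETA_ALLOWLIST


def in_post_release_beta(user_id: str, subset_percent: int) -> bool:
    """Post-release beta: a stable subset of live users keeps testing."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # same user always maps to the same bucket 0..99
    return bucket < subset_percent


print(in_closed_beta("Alice@example.test"))  # invited, case-insensitive match
print(in_closed_beta("carol@example.test"))  # not on the list
```

Hashing the user ID rather than picking users at random on each request keeps membership stable, so a given user sees the beta consistently across sessions.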

Benefits of Beta Testing

Beta testing is where the controlled assumptions of internal testing meet the messiness of the real world. The benefits aren’t just about finding more bugs; they run deeper than that.

Catching What Internal Testing Can’t

No matter how thorough alpha testing is, it has a ceiling. QA often tests pieces of software, major components, and workflows, but the overall use of the software, incorporating all components, user experience, and performance, is frequently left out. Beta testing covers those gaps. Real users interact with the product in ways no internal team would think to script, and that unpredictability is exactly what makes beta testing valuable.

Validating Features in the Real World

There's a meaningful difference between a feature working in a staging environment and a feature working in the wild. Beta testing happens in the real world, delivering results that simply won't occur in a test environment. It’s a true test of whether features work as they should. That distinction matters more than most teams acknowledge until something breaks post-launch.

Surfacing Usability Issues That Specs Never Anticipated

A product can be built exactly to specification and still feel frustrating to use. Beta testing primarily focuses on understanding and improving the full end-user experience. Beta testers work through the experience flow and report any pain points that get in the way. Some of those reports are subjective, but collectively they point to issues that affect customer conversions and brand reputation. That kind of feedback is impossible to generate internally.

Reducing the Cost of Post-Launch Fixes

Fixing issues before a full release makes adoption smoother for users, and it is far more cost-effective than addressing them after launch. The closer to production a bug gets, the more expensive it becomes: in engineering time, in support load, and in user trust.

Driving Smarter Product Decisions

Beta testing allows teams to take a data-driven approach to feature development and avoid putting significant time and effort into features that yield low engagement. That’s not a minor benefit; it’s the difference between shipping things users actually want and shipping things that make sense on a roadmap.
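One simple way to put numbers behind that decision is to measure what share of beta testers touched each feature. The events, feature names, and tester count below are made-up sample data for illustration only.

```python
from collections import defaultdict

# Hypothetical beta usage events: (tester_id, feature_name)
events = [
    ("t1", "export"), ("t2", "export"), ("t3", "export"),
    ("t1", "dark_mode"), ("t1", "dark_mode"),
    ("t1", "search"), ("t2", "search"), ("t3", "search"), ("t4", "search"),
]
total_testers = 4

testers_by_feature: dict[str, set[str]] = defaultdict(set)
for tester_id, feature in events:
    testers_by_feature[feature].add(tester_id)  # count each tester once per feature

# Adoption = distinct testers who used the feature / all testers in the beta.
adoption = {
    feature: len(testers) / total_testers
    for feature, testers in testers_by_feature.items()
}

for feature, rate in sorted(adoption.items(), key=lambda item: item[1], reverse=True):
    print(f"{feature}: {rate:.0%} of testers")
# prints:
# search: 100% of testers
# export: 75% of testers
# dark_mode: 25% of testers
```

Counting distinct testers rather than raw events matters here: `dark_mode` has two events but only one tester behind them, which is a very different signal from two separate users adopting it.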

Building Early Momentum

Beta testing generates early market interest and visibility, which enhances product adoption rates. The users who participate in a beta aren’t just testers; they’re early advocates. When they feel heard and see their feedback reflected in the final product, that relationship carries forward into launch and beyond.

Stress-Testing the Product at Scale

Beta testing puts the product in front of real users in real-world environments, at a volume and variety internal tests can only approximate. No internal load test replicates what happens when actual users, on actual devices, hit a product all at once. The feedback from that traffic helps teams identify issues, refine the product, reduce the financial risk of a rough launch, and adjust their launch strategy.

Limitations of Beta Testing

Beta testing is the closest thing to a real-world rehearsal before launch, but it isn't without its own set of problems. Knowing where it falls short helps teams plan around the gaps rather than get blindsided by them.

You Can’t Control the Testing Environment

This is the trade-off at the heart of beta testing. The real-world diversity that makes it valuable also makes it unpredictable. The testing environment is not under the control of the development team, and bugs are often hard to reproduce because the conditions differ from one user to the next. A defect that one tester can reproduce consistently might be completely invisible on another device or network setup, which makes diagnosing and fixing it significantly harder.
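One common mitigation is to attach environment details to every bug report automatically, so the team can at least see how the failing setup differs from a working one. The sketch below uses Python's standard `platform` module; the report structure, the `build_bug_report` helper, and the app version string are assumptions for illustration.

```python
import json
import platform
from datetime import datetime, timezone


def build_bug_report(summary: str, steps: list[str]) -> dict:
    """Bundle the tester's description with details of the machine it ran on."""
    return {
        "summary": summary,
        "steps_to_reproduce": steps,
        "reported_at": datetime.now(timezone.utc).isoformat(),
        "environment": {
            "os": platform.system(),
            "os_release": platform.release(),
            "machine": platform.machine(),
            "python_version": platform.python_version(),
            "app_version": "0.9.0-beta",  # hypothetical version string
        },
    }


report = build_bug_report(
    "Export button does nothing",
    ["Open a project", "Click Export", "Nothing happens"],
)
print(json.dumps(report, indent=2))
```

With two reports side by side, a difference in `os_release` or `machine` often narrows down where to look before anyone tries to reproduce the bug.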

Feedback Quality Is Inconsistent

Beta testers aren’t trained QA engineers. Some will file detailed, actionable bug reports. Others will send a one-line message saying something "feels off." Bug reporting from beta testers is frequently not systematic, and duplicate reports are common, which means the team ends up spending time sorting through noise rather than acting on signal. The value of beta feedback depends heavily on how well the process is structured and how clearly testers are guided.
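Part of that sorting can be automated. The sketch below groups reports whose wording boils down to the same set of keywords. The normalization is deliberately crude and the sample reports are invented; real duplicate detection would need fuzzier matching than this.

```python
import re
from collections import defaultdict

STOP_WORDS = {"the", "a", "an", "is", "it", "when", "i", "on", "to", "my"}


def fingerprint(report_text: str) -> str:
    """Crude normalization: lowercase, keep letters only, drop filler, sort unique words."""
    words = re.findall(r"[a-z]+", report_text.lower())
    return " ".join(sorted(set(words) - STOP_WORDS))


# Hypothetical one-line reports from beta testers.
reports = [
    "App crashes when I tap Export",
    "app crashes on tap export!!",
    "Export tap crashes the app",
    "Login screen feels off",
]

groups: dict[str, list[str]] = defaultdict(list)
for text in reports:
    groups[fingerprint(text)].append(text)

for key, members in groups.items():
    print(f"{len(members)} report(s) -> {key}")
# prints:
# 3 report(s) -> app crashes export tap
# 1 report(s) -> feels login off screen
```

Grouping does not replace triage, but it turns three separate tickets into one issue with three confirmations, which is a more useful signal.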

It Doesn’t Guarantee Full Coverage

Beta testing may not cover all possible scenarios and user environments; certain issues might still go unnoticed until a wider audience starts using the product. Feedback from a small group may not reflect the broader user population's views and needs, and some bugs only appear when the product is used at a much larger scale post-launch. A successful beta is encouraging, but it isn’t a guarantee.

It Takes Significant Time and Resources to Manage

Running a beta program properly isn’t lightweight. It requires tools to collect and make sense of feedback, ongoing effort to manage it, and constant recruitment as testers drop off over time. Teams that underestimate this often end up with a beta program that generates feedback no one has time to use.

Poor Outcomes Can Create Negative Publicity

If testers face significant issues or the product falls short of expectations, there is a possibility of negative publicity. Beta testers talk. They post on forums, share experiences on social media, and form opinions that stick. Releasing a beta before the product is stable can generate bad press before you’ve even launched.

It Can Delay the Final Release

Addressing feedback from beta testing may delay the final release, especially if significant changes are needed. That’s not inherently a bad thing, since shipping something broken is worse than shipping it late. But teams need to build realistic timelines that account for the possibility of meaningful rework coming out of beta, not just minor polish.

Difference Between Alpha and Beta Testing

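Both phases serve the same ultimate goal, shipping software that works, but they differ in almost every other way. Here’s a side-by-side breakdown of the key distinctions:

| Aspect | Alpha testing | Beta testing |
| --- | --- | --- |
| Who tests | QA teams, developers, and sometimes internal stakeholders | Real end users outside the organization |
| Environment | Controlled staging environment | Real-world production environment |
| Timing | First formal round, before anyone outside the team sees the product | Nearly finished product, before official release |
| Techniques | White-box and black-box testing | Real-world usage and user feedback |
| Test data | Small, synthetic data sets | Real usage on real devices and networks |
| Primary focus | Functional bugs and stability | User experience, edge cases, and real-world conditions |
| Main limitation | Can’t replicate real-world variety; internal bias | Uncontrolled environment; inconsistent feedback |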

The simplest way to think about it: alpha testing is about building confidence internally, and beta testing is about validating that confidence externally. You need both, and you need them in the right order.

Alpha and Beta Testing With TestFiesta

Running alpha and beta testing well isn’t just about having the right process; it’s about having the right tools to support it.

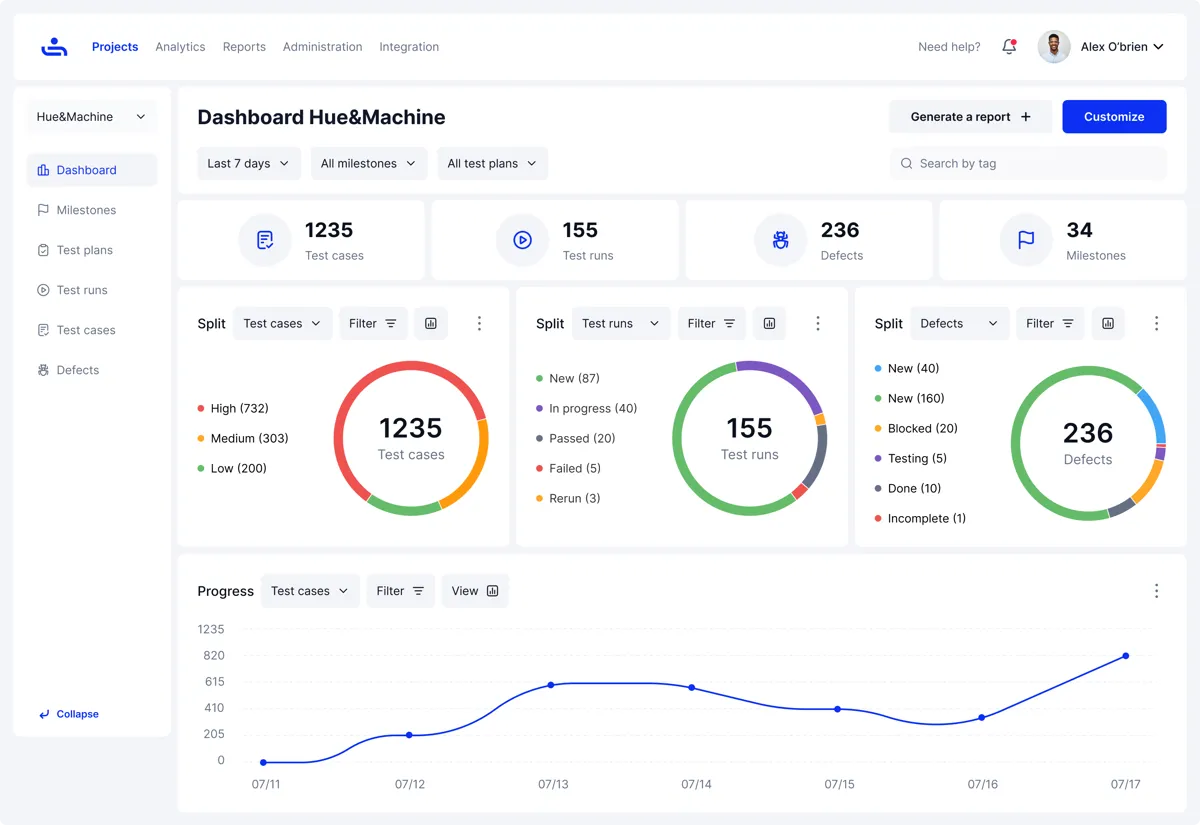

TestFiesta is built to make both phases easier to manage, track, and learn from without adding unnecessary overhead.

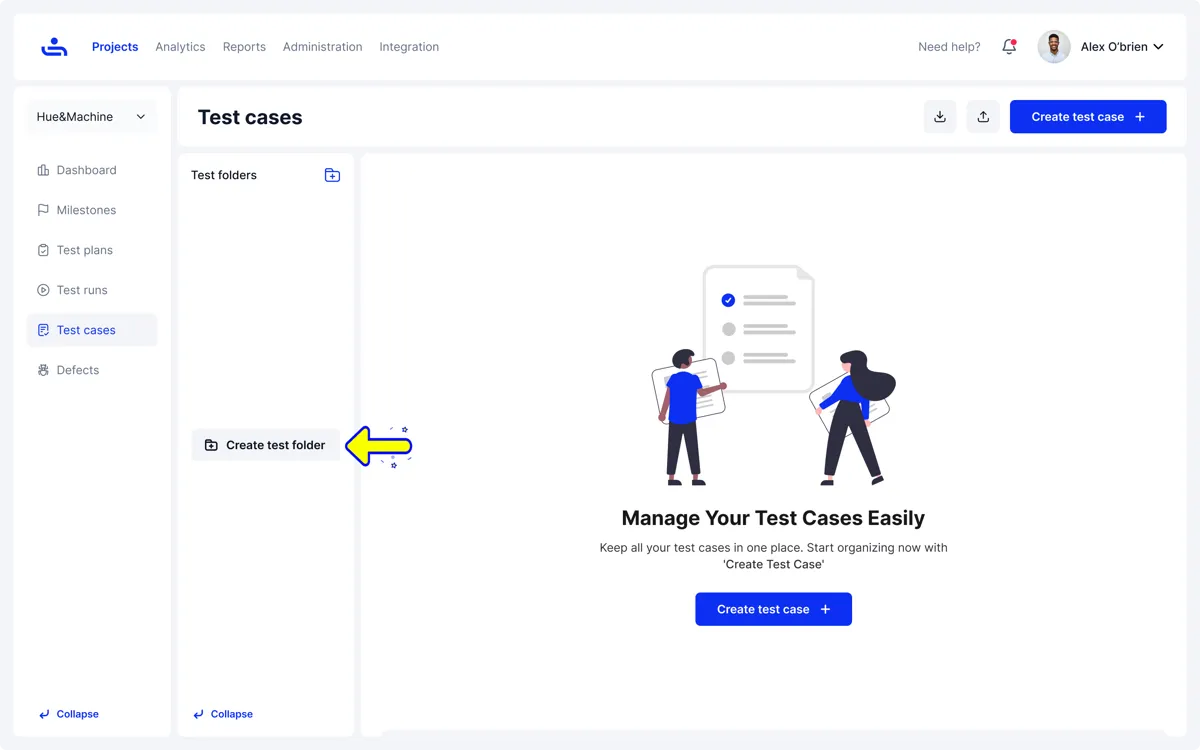

Test cases are easy to create, organize, and maintain, structured by feature, risk, or sprint, and the AI Copilot generates them directly from requirements, so teams spend less time on setup and more time on actual testing.

When defects are found, they’re logged in the same place where testing is happening, with no tool switching required. And when it comes to knowing whether you’re ready to move forward, the reporting gives a clear picture of coverage, pass rates, and where the risk sits, so that call is based on evidence, not instinct.

On the integration side, TestFiesta connects natively with Jira and GitHub, so defects flow straight into the development workflow without manual handoffs. Whether you’re in a tightly controlled alpha phase or managing feedback from external beta testers, TestFiesta keeps everything connected and in one place.

Conclusion

Alpha and beta testing aren’t interchangeable; they serve different purposes, involve different people, and catch different kinds of problems. Alpha keeps things controlled and internal, making sure the product is stable and functional before anyone outside the team sees it. Beta takes it into the real world, validating that it actually holds up when real users get their hands on it.

Skipping either phase, or treating them as formalities, is how preventable issues make it to production. The teams that get the most out of both are the ones who treat them as distinct, deliberate checkpoints, not boxes to tick on the way to launch.

Used well and supported by the right tool, alpha and beta testing are what separate a confident release from a hopeful one.

FAQs

How does alpha testing differ from beta testing?

Alpha testing is internal, done by your own team in a controlled environment before the product is ready for outside eyes. Beta testing is external, done by real users in the real world once the product is stable enough to share. Alpha focuses on finding functional bugs and stability issues. Beta focuses on validating the experience, catching edge cases, and confirming the product holds up under real-world conditions.

Should I use alpha testing or beta testing?

Both, ideally. They’re not competing approaches; they’re sequential ones. Alpha comes first to make sure the product is solid enough for external testing. Beta comes after to validate it against real users. Choosing one over the other isn’t really a choice; skipping alpha means sending a potentially unstable product to real users, and skipping beta means shipping without any real-world validation.

Which testing type is best for my software?

It depends on where you are in the development cycle. If the product is still being actively built and hasn’t been tested end-to-end, alpha testing is where you start. If it’s near-final and you need to know how real users will respond to it, beta is the right move. For most software, the answer isn’t one or the other; it’s both, in the right order, with clear goals for each phase.

How should I evaluate my needs and goals for an ideal software testing type?

Start with what you’re building and what’s at risk. Then define your goal: catching bugs early, improving user experience, or reducing release risk. From there, choose a mix of testing types that support those goals. There’s no single right approach; it’s about what fits your product and how your team works.

Can I do both alpha testing and beta testing at the same time?

Not effectively. They’re designed to run in sequence for good reason; beta testing assumes the product has already been through an internal review. Running both simultaneously means exposing real users to a product that hasn’t been properly stabilized yet, which defeats the purpose of beta testing and risks creating a poor first impression with the people whose feedback you need most. Finish alpha, act on what it surfaces, then move into beta with a product that’s actually ready for it.