Introduction

Verification asks: Are we building the product right?

Validation asks: Are we building the right product?

Many QA teams use these terms interchangeably. The distinction matters because a product can pass verification perfectly and still fail validation. If the requirements were wrong from the start, flawless implementation still delivers the wrong software.

Verification catches gaps between the spec and the build. A static code review, a requirements traceability matrix, and a test plan audit. You're checking whether what's been built matches what was specified, without executing a single line of code.

Validation catches gaps between the spec and reality. User acceptance testing, end-to-end scenarios, and beta releases in production conditions. You're confirming the software does what users actually need.

Both matter. Neither substitutes for the other.

What Verification and Validation Mean in Software Testing

Verification covers all static activities: reviewing requirement documents for ambiguity, inspecting test cases before execution, peer reviewing code, and checking that test coverage maps back to stated requirements. The goal is catching issues early, before they become expensive fixes in a running system.

Validation covers dynamic activities: running the software and confirming it behaves as users expect. Functional tests, integration tests, system tests, and UAT. You're not just checking that code matches the spec. You're checking that the whole system makes sense to the person using it.

Verification is internal-facing. The team checks its work against its own definitions.

Validation is external-facing. The software measures up against real users and real use cases.

In typical QA workflows, verification happens earlier, and validation happens later. But they overlap constantly. You might validate the last sprint's feature while verifying requirements for the next one. Neither is a one-time gate. Both run continuously throughout the project.

Verification: Building It Right

Verification evaluates work products, not running software. Requirements documents, design specs, code, test assets. You're confirming they're correct, complete, and consistent before anyone builds on them.

The defining characteristic: it's static. Nothing executes.

Core Verification Activities

Requirements reviews catch ambiguity, missing acceptance criteria, and untestable statements before anyone codes against them. A requirement ambiguity caught in review costs an hour of conversation. The same ambiguity caught in UAT costs a sprint of rework and a round of regression testing.

Design inspections confirm that architecture decisions align with specifications. No design drift, no lost requirements in translation.

Code reviews surface logic errors, standards violations, and deviations from design before builds reach test environments. Two sets of eyes on every change.

Test case reviews confirm that test coverage actually maps to requirements and that two different testers would execute the same case identically.

Traceability audits verify every requirement has corresponding test coverage and nothing slips through.
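A traceability audit can be sketched in a few lines of Python. This is a minimal illustration, not a description of any particular tool: the requirement and test case IDs, the `audit_traceability` function, and the assumption that each test case lists the requirements it covers are all hypothetical.

```python
from typing import Dict, List, Set


def audit_traceability(
    requirements: Set[str], test_to_reqs: Dict[str, List[str]]
) -> Dict[str, List[str]]:
    """Compare the requirement list against the requirements each test claims to cover."""
    covered = {req for reqs in test_to_reqs.values() for req in reqs}
    return {
        # Requirements that no test case references.
        "uncovered_requirements": sorted(requirements - covered),
        # Tests that reference no known requirement (possibly stale or orphaned).
        "orphan_tests": sorted(
            test
            for test, reqs in test_to_reqs.items()
            if not set(reqs) & requirements
        ),
    }


# Hypothetical IDs, for illustration only.
requirements = {"REQ-1", "REQ-2", "REQ-3"}
test_to_reqs = {
    "TC-10": ["REQ-1"],
    "TC-11": ["REQ-1", "REQ-2"],
    "TC-12": ["REQ-9"],  # points at a requirement that is not in the current list
}

report = audit_traceability(requirements, test_to_reqs)
print(report)
```

Nothing here executes the software under test. The audit only inspects the mapping between artifacts, which is what makes it a verification activity.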

When Verification Happens

Verification runs throughout the entire SDLC. Every phase produces outputs that should be checked before the next phase builds on them.

Requirements phase: Reviews, walkthroughs, and inspections catch contradictions, ambiguity, and missing acceptance criteria. This is where verification has maximum leverage.

Design phase: Architecture documents get reviewed against requirements to ensure nothing was lost or distorted.

Development phase: Code reviews and static analysis happen continuously. Developers and QA verify implementation aligns with design before code hits test environments.

Test planning phase: Test plans, cases, and scripts are reviewed before execution. Verifying test assets before running them ensures that validation tests the right things.

Pre-release phase: Traceability audits confirm every requirement has test coverage before final validation.

Verification isn't a phase. It's a habit at every handoff point. Skipping those checks doesn't save time. It moves the cost of fixing problems to the most expensive part of the cycle.

Validation: Building the Right Thing

Validation evaluates running software against the end user's needs. The only way to answer "did we build the right thing" is to execute the system and observe what it does.

The defining characteristic: it's dynamic. The system must run.

Core Validation Activities

Functional testing confirms each feature behaves as users expect, not just as the spec describes.

System testing exercises the fully integrated system under realistic conditions to confirm that all components work together correctly.

User acceptance testing (UAT) puts software in front of actual users or stakeholders. Real people confirm it meets real-world needs before release.

End-to-end testing walks through complete user journeys to validate that the system holds together from start to finish, not just feature by feature.

Beta testing releases to a limited real-world audience to surface issues that controlled test environments didn't catch.

Validation catches emergent behavior: problems that only appear when real users interact with a real system in ways the team didn't anticipate. Requirements can be perfectly verified and still fail to account for how people actually use software. Validation exposes that gap.

When Validation Happens

Like verification, validation is distributed across the SDLC. It naturally sits later in the cycle because you need a working system to validate against.

Development phase: Unit tests written by developers validate that individual components behave correctly under real execution. In TDD workflows, this happens before features are complete.

Integration phase: Integration testing validates that the combined components work together as designed. Interface assumptions get tested against reality.

System testing phase: The fully assembled system gets validated against overall requirements in an environment mirroring production. QA runs functional, regression, performance, and exploratory tests against the complete build.

User acceptance testing: Stakeholders or end users validate the system against their actual workflows. This is the final opportunity to catch requirement gaps before release.

Post-release: Smoke tests, monitoring, and production verification confirm deployment went cleanly and the system behaves correctly under real load. In continuous delivery, this phase feeds directly into the next development cycle.

Validation activities progress closer to reality as the SDLC advances: from isolated unit behavior to actual users in production. Each stage builds on the last. Gaps in early validation show up as expensive problems later.

Verification vs Validation: Direct Comparison

Both concepts are clear individually, but understanding how they compare directly across key dimensions helps QA teams apply them effectively. The differences are more than semantic. They have practical implications for workflow, ownership, and cost.

Static vs Dynamic Testing

Verification is static. No code execution required. You're reviewing, inspecting, analyzing artifacts: requirements documents, design specs, code, test cases.

Validation is dynamic. Software must run. You're executing tests against a live system, observing real behavior.

This fundamental difference drives every other distinction between them.

Questions They Answer

Verification checks if the product matches the specification.

Validation checks if the product solves the intended problem.

A system can meet every specification and still fail validation because the specs themselves were wrong, incomplete, or misaligned with user needs.

Timing and Overlap

Verification happens earlier and runs continuously throughout the SDLC, from requirements review through pre-release audits.

Validation happens once there's a working system and intensifies toward the end: system testing, UAT, and release.

They overlap significantly. You're often verifying the next sprint's requirements while validating this sprint's build.

Ownership

Verification is a shared responsibility. Business analysts review requirements. Architects review designs. Developers peer review code. QA engineers review test cases and traceability. Every discipline produces artifacts that need checking.

Validation is primarily owned by QA, though UAT involves stakeholders and end users, and developers own unit-level validation. The further into the cycle, the more validation shifts toward dedicated testing roles.

Environment Requirements

Verification requires no execution environment. You can verify a requirements document, design diagram, or code change in a text editor.

Validation requires a running system: at minimum, a development or staging environment, ideally one mirroring production as closely as possible.

This is a practical constraint worth planning for. Validation is environment-dependent in a way verification isn't.

Cost Dynamics

Verification is cheaper per issue found because it catches problems early, before they're built into a running system.

Validation catches what verification misses. But by the time validation surfaces a problem, more code has been written against it, more testing has been built around it, and fixing it is correspondingly more expensive.

The two aren't in competition. They're complementary cost controls at different points in the risk curve.

Verification Methods and Techniques

Verification covers a range of techniques, each suited to different artifact types and risk levels. Choosing the right method depends on what you're reviewing and how much rigor the situation requires. Formal inspections for critical requirements, lightweight reviews for routine code changes, and automated static analysis for continuous checks.

Reviews and Inspections

Formal inspections involve a small team (author, moderator, reviewers, scribe) evaluating an artifact against a checklist. Each person reviews beforehand, issues get logged during the session, and the author fixes them before moving forward.

Inspections work for high-risk artifacts: critical requirements, security-sensitive code, and test plans for complex features. The overhead is justified when the cost of a defect slipping through is high.

For lower-risk artifacts, a lighter process suffices: two-person review with async comments rather than formal meetings.

Walkthroughs

Less formal than inspections. The author leads peers through the artifact, explaining logic, decisions, and assumptions while reviewers ask questions and raise concerns in real time. No mandatory pre-review, no formal defect log, no moderator.

Walkthroughs excel at knowledge sharing as much as defect detection. Good fit for early-stage work: draft requirements, initial designs, work-in-progress test plans. The goal is to surface broad concerns and gain early alignment rather than formal sign-off.

Also useful for onboarding: walking a new team member through system design or test approach is verification and knowledge transfer simultaneously.

Desk Checking and Code Reviews

Desk checking is the lightest verification: a developer or tester manually working through their own artifact, line by line, before passing it to anyone else. Informal, unstructured, entirely self-directed.

The value is slowing down enough to actually read what you wrote rather than what you intended to write. A surprising number of obvious errors get caught this way before reaching a peer.

Code reviews are peer-facing: one or more engineers reviewing a colleague's code before merging. Most modern teams handle this through pull request reviews with inline comments.

Effective code reviews check logic correctness, standards compliance, test coverage, edge case handling, and security considerations. Not just style. The difference between a useful review and a superficial one usually comes down to whether the reviewer traces the logic or just skims for obvious issues.

Static Analysis Tools

Static analysis automates significant code verification by analyzing the source without executing it. Checks for syntax errors, type mismatches, unreachable code, security vulnerabilities, complexity violations, and standards deviations at speeds no human can match.

Common examples: SonarQube for code quality and security, ESLint and Pylint for language-specific linting, Checkmarx or Veracode for security-focused analysis.

Most CI pipelines run static analysis automatically on every commit. Verification happens continuously rather than as scheduled review events.

The practical value: tools handle mechanical, rule-based checks so human reviewers can focus on what tools can't catch. Intent, logic, architecture decisions, and edge cases requiring domain knowledge. Static analysis and human review aren't alternatives. They cover different verification surfaces.
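To show what "analyzing the source without executing it" looks like, here is a toy check built on Python's standard `ast` module. It is a sketch of one narrow rule (flagging a statement that directly follows `return`, `raise`, `break`, or `continue` in the same block) and makes no claim about how SonarQube, Pylint, or any other tool implements its checks. The `find_unreachable` function and the sample source are hypothetical.

```python
import ast
from typing import List

# Statements after which nothing else in the same block can run.
_TERMINATORS = (ast.Return, ast.Raise, ast.Continue, ast.Break)


def find_unreachable(source: str) -> List[int]:
    """Return line numbers of statements that directly follow a terminator
    in the same block. The source is parsed, never executed."""
    unreachable = []
    for node in ast.walk(ast.parse(source)):
        # Check every statement list a node can own: body, else branch, finally block.
        for field in ("body", "orelse", "finalbody"):
            block = getattr(node, field, None)
            if not isinstance(block, list):
                continue
            for prev, stmt in zip(block, block[1:]):
                if isinstance(prev, _TERMINATORS):
                    unreachable.append(stmt.lineno)
    return sorted(unreachable)


SAMPLE = '''
def daily_cap_ok(amount, cap):
    if amount > cap:
        return False
        print("over cap")
    return True
'''

print(find_unreachable(SAMPLE))  # the print call on line 5 can never run
```

The check is purely mechanical, which is the point: a rule like this is cheap to run on every commit, while the question of whether `daily_cap_ok` encodes the right business rule still needs a human reviewer.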

Validation Methods and Techniques

Validation techniques are all about executing software and observing real behavior. The method you choose determines testing depth, perspective, and the kinds of defects you're most likely to surface.

Black Box Testing

Black box testing validates software purely from the outside. The tester has no visibility into internal code structure, logic, or implementation. Define inputs, execute the system, and evaluate outputs against expected behavior. What happens in between is irrelevant.

This reflects how real users interact with software, which makes it useful for validation. You're not checking how the code is written. You're checking whether the system works as expected for the user.

Common techniques: equivalence partitioning, boundary value analysis, decision table testing, and state transition testing. Each identifies different issue types by choosing effective inputs without looking at code.
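Boundary value analysis, one of the techniques named above, is easy to sketch. The example below assumes a hypothetical rule that a transfer must be between 1 cent and a daily cap of 500,000 cents; the cap, the `transfer_allowed` function, and the `boundary_values` helper are illustrative assumptions, not part of any real system. The inputs are derived from the stated range alone, without looking at how the check is implemented.

```python
from typing import List


def boundary_values(minimum: int, maximum: int) -> List[int]:
    """Boundary value analysis for an inclusive integer range:
    each boundary, plus the values just inside and just outside it."""
    candidates = [
        minimum - 1, minimum, minimum + 1,
        maximum - 1, maximum, maximum + 1,
    ]
    # Remove duplicates (possible for very narrow ranges) and sort.
    return sorted(set(candidates))


def transfer_allowed(amount_cents: int, daily_cap_cents: int = 500_000) -> bool:
    """Hypothetical system under test: accepts 1 cent up to the daily cap."""
    return 1 <= amount_cents <= daily_cap_cents


for amount in boundary_values(1, 500_000):
    print(amount, transfer_allowed(amount))
```

Six inputs cover both edges of the valid partition. Off-by-one mistakes such as writing `<` instead of `<=` tend to show up at exactly these values.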

The limitation: coverage confidence. Without code visibility, you can't know whether tests exercise all paths that matter. White box testing fills that gap.

White Box Testing

White box testing validates software from the inside. The tester has full visibility into source code, internal logic, and control flow. Test cases are designed to exercise specific code paths, branches, conditions, and loops rather than just observable inputs and outputs.

Metrics like statement coverage, branch coverage, and path coverage come from here. White box testing excels at finding logic errors, unreachable code, untested edge cases, and security vulnerabilities that wouldn't be obvious from the outside.

Typically performed by developers or QA engineers with direct codebase access.

The trade-off: perspective. White box testing validates what code does, but can't tell you whether what it does is what users actually need. You can achieve 100% branch coverage and still ship a product that fails UAT.

White box and black box testing are complementary. Each surfaces defects that the other misses.
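The coverage metrics mentioned above can be illustrated with a toy line tracer built on the standard library's `sys.settrace`. This is a sketch for intuition only; real projects would normally rely on a dedicated tool such as coverage.py. The `fee` function and its pricing rules are hypothetical.

```python
import sys
from typing import Callable, Set


def executed_lines(func: Callable, *args) -> Set[int]:
    """Run func(*args) and return the line offsets (relative to the def line)
    that executed inside func. A toy stand-in for a coverage tool."""
    code = func.__code__
    lines: Set[int] = set()

    def tracer(frame, event, arg):
        # Record only 'line' events that belong to the function being measured.
        if frame.f_code is code and event == "line":
            lines.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)  # always restore whatever tracer was active before
    return lines


def fee(amount: int, premium: bool) -> int:
    if premium:
        return 0
    if amount > 1000:
        return 5
    return 10


# Test inputs chosen by reading the code: one per path through the branches.
covered = (
    executed_lines(fee, 50, True)
    | executed_lines(fee, 2000, False)
    | executed_lines(fee, 50, False)
)
print(sorted(covered))
```

All five statements in `fee` execute across the three calls, so statement coverage is complete. That still says nothing about whether a flat fee of 10 is what users expect, which is the perspective gap described above.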

Unit Testing and Integration Testing

Unit testing validates individual components in isolation: a single function, method, or class. Execute it with controlled inputs, assert expected outputs. The scope is deliberately narrow. The goal is to confirm each unit behaves correctly on its own before combining with anything else.

Unit tests are fast, cheap to run, and give precise diagnostics when they fail. In TDD workflows, they're written before the code itself, which means validation is built into development rather than layered on top. A solid unit test suite is one of the most reliable early-warning systems in a QA strategy.

Integration testing validates that the combined components work together correctly. A unit can pass all its tests but fail when integrated with dependencies. Integration testing catches interface mismatches, timing issues, and interaction bugs that unit tests miss by design.
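The difference in scope between the two levels can be sketched as follows. The `parse_amount` function, the `Ledger` class, and the `transfer` function are hypothetical components invented for this illustration, and the tests are plain functions with assertions rather than any specific framework's API.

```python
def parse_amount(text: str) -> int:
    """Unit under test: convert '12.50' style input to integer cents."""
    dollars, _, cents = text.strip().partition(".")
    return int(dollars) * 100 + int((cents + "00")[:2])


class Ledger:
    """A second component with its own rule: no overdrafts."""

    def __init__(self, balance_cents: int) -> None:
        self.balance_cents = balance_cents

    def withdraw(self, amount_cents: int) -> None:
        if amount_cents > self.balance_cents:
            raise ValueError("insufficient funds")
        self.balance_cents -= amount_cents


def transfer(ledger: Ledger, amount_text: str) -> int:
    """Integration point: parsed input flows into the ledger."""
    ledger.withdraw(parse_amount(amount_text))
    return ledger.balance_cents


def test_parse_amount_unit() -> None:
    # Unit level: one function, controlled inputs, no collaborators.
    assert parse_amount("12.50") == 1250
    assert parse_amount("12.5") == 1250
    assert parse_amount("12") == 1200


def test_transfer_integration() -> None:
    # Integration level: both components together, confirming they agree on cents.
    ledger = Ledger(balance_cents=2000)
    assert transfer(ledger, "12.50") == 750
    try:
        transfer(ledger, "10.00")
    except ValueError:
        pass  # overdraft correctly rejected
    else:
        raise AssertionError("overdraft should be rejected")


test_parse_amount_unit()
test_transfer_integration()
```

If `parse_amount` returned dollars while `Ledger` expected cents, each component could still pass its own unit tests. Only the integration test would expose the mismatch.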

In the testing pyramid, unit tests form the broad base and integration tests sit in the layer above them.

System Testing and User Acceptance Testing

System testing validates the fully integrated application as a whole, in an environment resembling production as closely as possible. QA takes the broadest view: running functional tests, regression suites, performance tests, and exploratory sessions against the complete build. The goal is to confirm the system meets specified requirements end-to-end, across all integrated components, under realistic conditions.

User acceptance testing (UAT) takes validation further by involving real users or stakeholders. Final check before release. Instead of asking if the system works, UAT asks if it works for actual users in real scenarios.

Requirements that seem complete on paper often show gaps when real users interact with the system. UAT catches those gaps before the product goes live.

Verification and Validation Real-World Examples

Theory clarifies concepts, but examples make them concrete. These two scenarios show how verification and validation work in practice, one complex and one simple, both illustrating the same core distinction.

Mobile Banking Application

A team builds a mobile banking app with account balance display, fund transfers, transaction history, and push notifications.

Verification: The BA team reviews fund transfer requirements and catches an ambiguity early. The spec says transfers should be "processed within a reasonable time" without defining what that means. It gets resolved before development starts. Architects review the system design to confirm the authentication layer correctly implements the security requirements. Developers peer review code before merging, catching a logic error in transaction limit validation that would have allowed transfers above the user's daily cap. QA engineers review the notification feature's test cases and find that no test covers the scenario where a push notification fires while the app is in the background on iOS. All before a single test executes.

Validation: QA runs functional tests on transfers, balance updates, and transaction history. Performance testing ensures the app handles multiple concurrent users without slowdown. During UAT, beta users report that the transfer confirmation screen is confusing. They aren't sure if the transfers actually went through. The requirement said to "display a confirmation screen," and it exists. But validation showed that technical correctness wasn't enough for real users.

Submit Button Functionality

A form with a submit button. Requirement states: "The submit button shall be disabled until all mandatory fields are completed and input passes format validation."

Verification: QA reviews the requirement and flags that "format validation" is undefined. It is unclear whether email format, phone number format, or both are in scope. This gets clarified before development. The developer's code is reviewed, and the reviewer notices that the disabled-state logic checks field completion but doesn't hook into the format validation function yet. It's stubbed with a placeholder. Caught and resolved before code reaches the test environment.

Validation: Feature is built and deployed to test. QA executes a test with all fields completed, but with an invalid email format. The submit button is enabled anyway, allowing form submission with bad data. That's a defect. Another test fills all fields correctly but uses a phone number with spaces rather than dashes. The button stays disabled when it should be enabled. Another defect. Both are impossible to find through verification alone because they only appear when the code actually runs.
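A sketch of the corrected behavior, with the two validation scenarios expressed as executable checks, might look like this. The field names and both regular expressions are assumptions made for illustration (the article's requirement leaves the formats undefined), and `submit_enabled` is a hypothetical stand-in for the real UI logic.

```python
import re

# Assumed formats, for illustration only.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
PHONE_RE = re.compile(r"^\d{3}[- ]\d{3}[- ]\d{4}$")  # accepts dashes or spaces

MANDATORY = ("name", "email", "phone")


def submit_enabled(form: dict) -> bool:
    """Enabled only when every mandatory field is filled and both formats are valid."""
    if any(not form.get(field, "").strip() for field in MANDATORY):
        return False
    return bool(EMAIL_RE.match(form["email"])) and bool(PHONE_RE.match(form["phone"]))


# Scenario 1: all fields completed, but the email format is invalid.
bad_email = {"name": "Ana", "email": "not-an-email", "phone": "555-123-4567"}

# Scenario 2: all fields valid, phone number written with spaces instead of dashes.
spaced_phone = {"name": "Ana", "email": "ana@example.com", "phone": "555 123 4567"}

print(submit_enabled(bad_email))     # should stay disabled
print(submit_enabled(spaced_phone))  # should be enabled
```

Both scenarios only produce evidence when the logic runs against concrete inputs, which is why they belong to validation rather than review.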

How Verification and Validation Work Together

Verification and validation aren't separate disciplines in well-run QA. They reinforce each other continuously.

Verification feeds validation. The quality of your validation is directly determined by how well verification was done upstream. Unreviewed requirements produce flawed test cases. Unverified test cases mean your validation phase runs the wrong tests against the right system. The connection is direct.

It runs the other way, too. Validation findings feed back into verification. When UAT surfaces a defect tracing back to a requirement gap, that's a signal about where the verification process broke down. Good QA teams use those signals to sharpen review checklists and tighten upstream processes.

The two activities run in parallel for most of a project's life: validating this sprint's build while verifying the next sprint's requirements. Rarely a clean handoff. Balance shifts toward validation as projects mature, but both are always in play.

Verification without validation is theory without proof. Validation without verification is execution without direction. Together they form a feedback loop that catches defects early, builds confidence progressively, and gives teams a solid basis for saying software is ready to ship.

Benefits of Disciplined Verification and Validation

When verification and validation are treated as first-class activities rather than box-ticking exercises, the impact shows up across the entire delivery process. These benefits compound over time, making the difference between teams that ship reliably and teams that firefight constantly.

Early Detection, Lower Costs

The earlier a defect is caught, the cheaper it is to fix. A requirement ambiguity resolved in review costs an hour. The same ambiguity caught in UAT costs a rework cycle, a regression run, and a delayed release. Commonly cited industry estimates put the cost of fixing a production defect at 10 to 100 times the cost of catching it in requirements.

Verification catches defects before they're built in. Validation catches them before they ship. Both are significantly cheaper than finding them in production.

Better Product Quality

Thorough verification means building against clear, testable, well-understood requirements. Thorough validation means testing the finished product against real user behavior, not just documented specs.

The combination produces software that works correctly and actually meets user needs. The only definition of quality that matters. Teams that skip either activity tend to ship products that technically pass their own tests but consistently frustrate the people using them.

Aligned Teams

Verification activities like requirements reviews, design inspections, and test case walkthroughs bring different teams together early. Developers, QA, product, and BAs spot misalignments before they become bigger problems.

That shared understanding carries into validation, where everyone is aligned on what software should do and how success is measured.

Reduced Project Risk

A significant proportion of project failures trace back to requirement defects: ambiguous specs, missing acceptance criteria, conflicting stakeholder expectations that were never caught because verification wasn't taken seriously. By the time those defects surface in validation, the schedule and budget impact is severe.

Disciplined verification dramatically reduces the likelihood of late-stage surprises. One of the most effective risk management tools available to QA teams.

Compliance and Auditability

In regulated industries (healthcare, finance, aerospace, automotive), verification and validation aren't optional. They're mandated by standards and regulations like ISO 9001, IEC 62304, and FDA 21 CFR Part 11. These require documented evidence that both activities were performed, and that the software meets specified requirements and intended use.

Even outside heavily regulated industries, a mature verification and validation process produces the audit trail and documented test evidence that supports compliance reviews, security assessments, and enterprise procurement.

Verification and Validation Best Practices

Having the right techniques is only half the equation. How you run verification and validation matters just as much as which activities you perform. These practices separate teams that execute verification and validation effectively from teams that treat it as bureaucratic overhead.

Plan Test Strategy Early

Verification and validation activities planned after development starts are already behind. The test strategy should be drafted during the requirements phase, before a line of code is written.

Define what will be verified and when, what validation activities are planned for each phase, what environments are needed, what entry and exit criteria look like, and who owns what. Early planning surfaces resource and tooling gaps before they become schedule problems.

The later a test strategy is written, the more it documents what happened rather than guiding what should happen.

Ensure Comprehensive Coverage

Gaps in verification show up as defects during validation. Gaps in validation show up as issues in production.

Strong coverage means linking requirements to test cases, tracking what code is tested, and ensuring each development stage includes proper checks. A requirements traceability matrix connects requirements to design, code, and tests, giving clear visibility into what's covered and what isn't.

Document Requirements, Tests, and Results

Documentation makes verification and validation repeatable and defensible. Requirements need to be written precisely enough to be testable. Test cases need to be written clearly enough that two different engineers execute them identically and get the same result. Test results need enough detail to support root cause analysis when something fails.

The discipline of documenting properly also improves activity quality. Writing a test case precisely forces you to think through edge cases you might have glossed over mentally.

Track Changes, Update Test Cases

Requirements change. Designs evolve. Code gets refactored. Every change is a potential source of test coverage drift, where tests no longer reflect what the software is supposed to do.

A change management process that automatically triggers review of affected test cases is risk control, not overhead. Test cases that aren't maintained become liabilities. They either pass when they shouldn't (because expected behavior changed and nobody updated the assertion) or fail for the wrong reasons, generating noise that erodes confidence in the test suite.
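One way to picture such a trigger is a version check between requirements and the test cases written against them. The sketch below assumes each requirement carries a version number and each test case records the version it was last reviewed against; `CaseRecord`, `cases_needing_review`, and all IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class CaseRecord:
    case_id: str
    requirement_id: str
    reviewed_at_version: int  # requirement version the case was last reviewed against


def cases_needing_review(
    current_versions: Dict[str, int], cases: List[CaseRecord]
) -> List[str]:
    """Return IDs of cases whose requirement changed (or disappeared) since their last review."""
    return [
        case.case_id
        for case in cases
        # A missing requirement yields None, which never equals a recorded version.
        if current_versions.get(case.requirement_id) != case.reviewed_at_version
    ]


# Hypothetical data: REQ-1 has moved to version 3, REQ-7 was removed.
current_versions = {"REQ-1": 3, "REQ-2": 1}
cases = [
    CaseRecord("TC-1", "REQ-1", reviewed_at_version=2),
    CaseRecord("TC-2", "REQ-2", reviewed_at_version=1),
    CaseRecord("TC-3", "REQ-7", reviewed_at_version=1),
]

stale = cases_needing_review(current_versions, cases)
print(stale)
```

Run on every requirement change, a check like this turns coverage drift from something discovered in a failed release into a review queue.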

Foster QA-Dev Collaboration

The most effective verification and validation happen when QA is involved from the start, not just at the end. When QA reviews requirements early, they catch issues sooner. Developers who understand the test approach write code that's easier to validate. When quality is shared across the team, outcomes improve.

Regular discussions between QA and development aren't just meetings. They're part of the verification process.

Automate Strategically

Automation scales both verification and validation without a matching increase in manual effort. Static analysis handles much of the code verification. Automated tests make it possible to validate the system on every build.

But automation is only useful if it tests the right things. Fast, poorly designed tests don't add value.

Focus automation on repetitive tasks: regression suites, smoke tests, API checks. Leave exploratory testing, UAT, and complex scenarios to humans, where judgment matters more than speed.

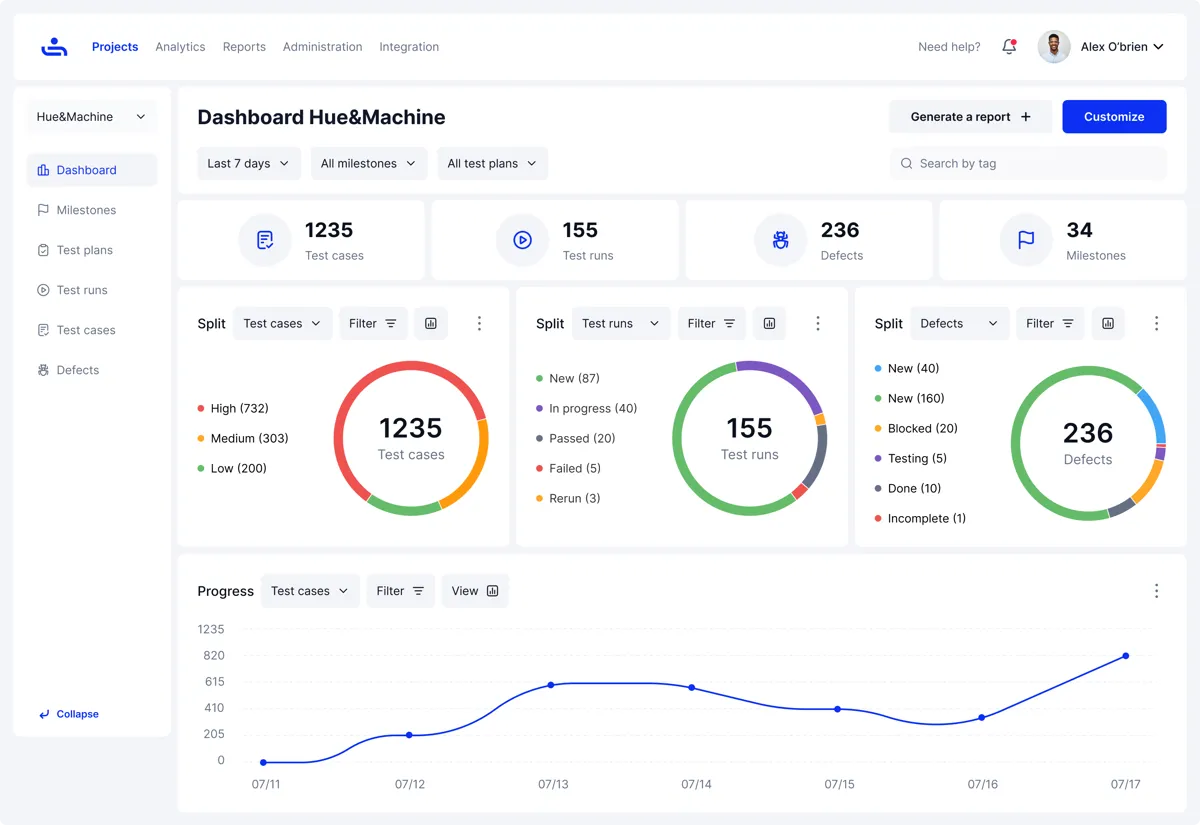

How TestFiesta Streamlines Verification and Validation Workflows

Managing verification and validation across the full SDLC means juggling many moving parts: requirements documents, test cases, traceability matrices, defect logs, team handoffs, and audit trails. TestFiesta consolidates all of that into one platform, so nothing falls through the gaps between tools and phases. The result is visibility, traceability, and control without the overhead of manually synchronizing disconnected systems.

Unified Platform for Static and Dynamic Testing

Most teams stitch together separate tools for verification and validation: one for document reviews, another for test case management, another for defect tracking. The overhead of keeping tools in sync is real. The gaps between them are where coverage drift and communication failures live.

TestFiesta consolidates both static and dynamic testing workflows into a single platform. QA engineers get visibility across verification and validation activities without switching context or reconciling data across multiple systems.

Seamless Requirements Traceability

Traceability is one of the most critical and most commonly neglected aspects of mature verification and validation. TestFiesta maintains live traceability links between requirements, test cases, and test results. Coverage gaps are visible in real time rather than discovered during pre-release audits.

When a requirement changes, affected test cases are immediately identifiable. When a test fails, the requirement it maps to is right there. That end-to-end visibility separates QA processes that can answer "are we covered?" with confidence from ones that can only guess.

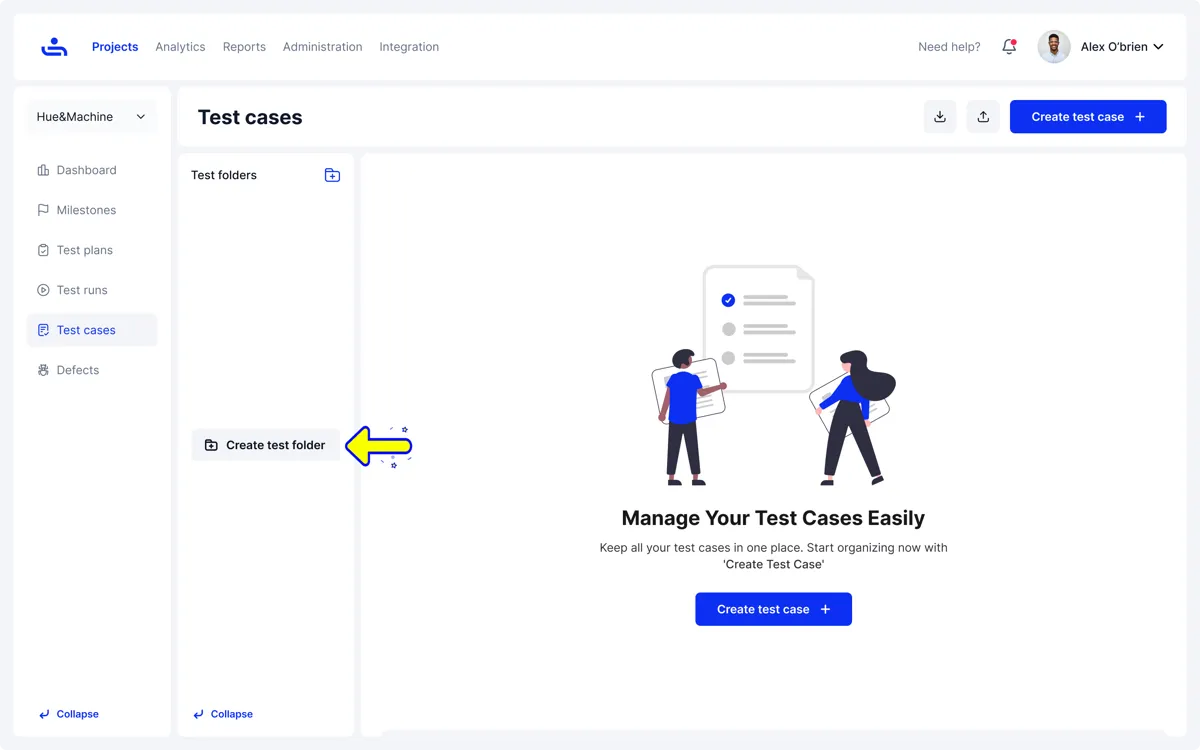

Test Case Management for Both Activities

TestFiesta's test case management supports both verification and validation workflows, not just execution tracking. Teams can create, review, and sign off on test cases before execution begins, building verification into test planning rather than treating it as optional.

During validation, test execution is tracked against reviewed cases with full result logging. That makes it straightforward to produce the documented evidence that compliance reviews and post-release retrospectives require.

Real-Time Collaboration

Verification and validation rely on input from multiple teams. Requirements reviews, UAT, and defect triage need developers, QA, and stakeholders to be aligned.

TestFiesta keeps conversations tied to the work. Comments, reviews, and approvals live directly on requirements, test cases, and defects instead of being scattered across emails or chats. This reduces coordination overhead and prevents things from slipping through the cracks.

Conclusion

Verification and validation answer different questions, operate at different SDLC points, and catch fundamentally different classes of defects. Used together, they give QA teams the best chance of shipping software that is both built correctly and solves the right problem.

Invest in verification early. Review requirements before code is written, inspect test cases before they're run, and treat every phase handoff as an opportunity to catch problems at their cheapest point. Then validate thoroughly. Execute against realistic environments, get real users involved in UAT, and use validation findings to sharpen upstream processes.

Neither works well in isolation. A team that verifies rigorously but validates superficially ships technically compliant software that frustrates users. A team that validates extensively but skips verification spends sprint after sprint fixing defects that a one-hour requirements review would have caught.

Getting verification and validation right is about catching the right problems at the right time and building the kind of confidence in releases that lets teams ship without crossing their fingers.

Frequently Asked Questions

What metrics should we track to measure verification and validation effectiveness?

Track defect escape rate (defects found in production vs earlier stages), cost per defect by phase (to quantify the value of early detection), requirements coverage percentage (what's tested vs what's specified), and mean time to defect detection. Also monitor verification activity completion rates, code review turnaround times, and UAT defect density. These testing metrics together show whether your verification and validation process is actually reducing risk or just generating busy work.
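Two of these metrics reduce to simple ratios. The sketch below uses one common formulation of each (escaped defects over all defects found, and requirements with at least one linked test over all requirements); teams define these differently, so treat the formulas, function names, and sample numbers as assumptions.

```python
def defect_escape_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that escaped to production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0


def requirements_coverage(requirements_with_tests: int, total_requirements: int) -> float:
    """Share of requirements that have at least one linked test case."""
    return requirements_with_tests / total_requirements if total_requirements else 0.0


# Hypothetical release: 4 defects found in production, 36 caught earlier,
# and 45 of 50 requirements linked to at least one test.
print(f"escape rate: {defect_escape_rate(4, 36):.0%}")
print(f"requirements coverage: {requirements_coverage(45, 50):.0%}")
```

Tracked release over release, the trend matters more than any single value: a falling escape rate alongside stable coverage suggests upstream checks are catching more.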

How do we convince stakeholders to invest time in verification activities?

Frame it in cost terms. Present historical data showing the cost differential between a defect caught in requirements review versus one caught in UAT or production. A single production defect is commonly estimated to cost 10-100x more to fix than the same issue caught in verification. Calculate the ROI: if your team spends 2 hours per week on requirements reviews over, say, an 8-week release (16 hours, or about 2 working days) and catches 3 issues that would have cost 5 days each to fix in UAT, you've avoided 15 days of rework for a net saving of roughly 13 days. Most stakeholders respond to that math.
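The ROI arithmetic is worth writing down so stakeholders can plug in their own numbers. The 13-day figure follows if the reviews run over an 8-week window with 8-hour working days; both of those values are assumptions added here, as is the `net_days_saved` helper.

```python
def net_days_saved(
    review_hours_per_week: float,
    weeks: int,
    issues_caught: int,
    rework_days_per_issue: float,
    hours_per_day: float = 8.0,
) -> float:
    """Rework avoided minus time spent reviewing, both in working days."""
    review_cost_days = review_hours_per_week * weeks / hours_per_day
    rework_avoided_days = issues_caught * rework_days_per_issue
    return rework_avoided_days - review_cost_days


# 2 h/week of review for 8 weeks = 2 days spent; 3 issues x 5 days = 15 days avoided.
print(net_days_saved(review_hours_per_week=2, weeks=8, issues_caught=3, rework_days_per_issue=5))
# prints 13.0
```

Changing the window or the per-issue rework cost shifts the result quickly, so it helps to use figures from your own defect history rather than generic estimates.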

What's the minimum viable verification and validation process for a small team with limited resources?

Start with these three non-negotiables: peer review of requirements before development begins (catches 60% of downstream defects), mandatory code review before merge (catches logic errors cheaply), and at least one full end-to-end test of critical user paths before release (validates the system actually works). Add a simple traceability spreadsheet linking requirements to tests. This basic process takes maybe 10% more time upfront, but typically cuts total rework time by 40-50%.

How do we handle verification and validation in continuous delivery pipelines where releases happen daily?

Automate what you can: static analysis in CI, unit and integration tests on every commit, smoke tests post-deployment. For verification, shift activities left: build review gates into your definition of ready, not your definition of done. For validation, use feature flags to release incrementally to subsets of users, essentially turning production into a continuous UAT environment. The principles don't change; the cadence compresses into shorter feedback loops.
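Incremental release behind a feature flag is often implemented as a deterministic percentage rollout. The following is a minimal sketch under that assumption, using only `hashlib` from the standard library; the `in_rollout` function, the flag name, and the user IDs are hypothetical and do not reflect any specific feature flag product.

```python
import hashlib


def in_rollout(user_id: str, feature: str, percent: int) -> bool:
    """Deterministically place a user in one of 100 buckets per feature.
    The same user always gets the same answer for the same feature and percentage."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


# Hypothetical rollout: expose a new checkout flow to 10% of users.
users = [f"user-{i}" for i in range(1000)]
exposed = [u for u in users if in_rollout(u, "new-checkout", 10)]
print(f"{len(exposed)} of {len(users)} users see the new flow")
```

Because buckets are stable, raising the percentage only adds users and never removes anyone already exposed, which keeps the feedback from early users comparable as the rollout widens.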